With the latest Capture One, you get an incredible level of control over your images. I’ve already been a fan of the layers and masks in Capture One for a while, but with the new update, the software can create those for you. This, and so much more, is why you should try out the new Capture One.

I don’t often get excited about updating software. As such, I was rocking Capture One 21 for the longest time, and only began updating regularly when version 23 came out. There was little incentive for me to update, as version 21 already had every feature I needed. That changed when I tried Capture One 23: I realized I was missing out, and did a full review of the software and how much it has changed.

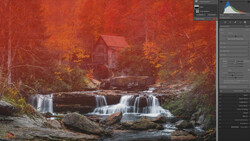

AI Masking and More

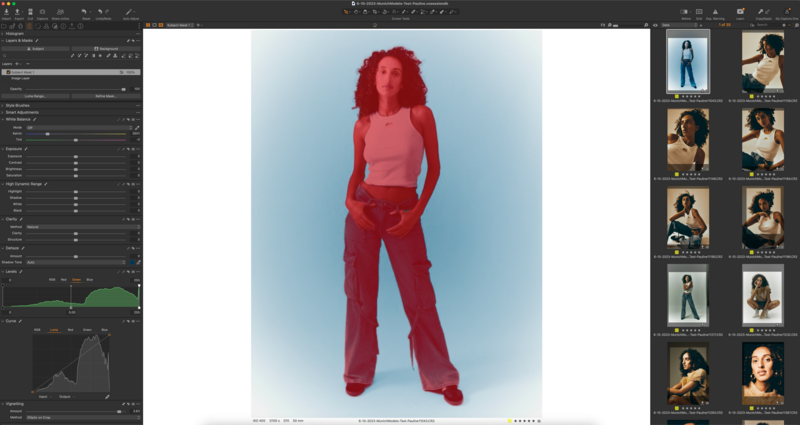

Capture One is now on par with its competitors by introducing new AI masking features. While it's true that Lightroom has had similar capabilities for a while, Capture One takes it a step further. It can mask not just the subject or background, but individual components of the image. For instance, changing the color of a garment or brightening the makeup on a model is now possible with Capture One AI masks. This feature is a significant time-saver for editing portrait and fashion work. I find the AI masking feature particularly useful for color grading and bringing out details in specific parts of the image. An exciting future development I anticipate is AI masks being automatically generated for each capture during tethering, providing an image that is close to final right away.

Re-Tethering

Another great feature in the new Capture One update is the re-tether functionality. While I ensure I have plenty of tether cables on set, sometimes even a 10-meter cable isn’t enough. The re-tether feature lets you disconnect the camera, shoot, then reconnect, using the memory card as temporary storage. The images transfer to the catalog as soon as you reconnect.

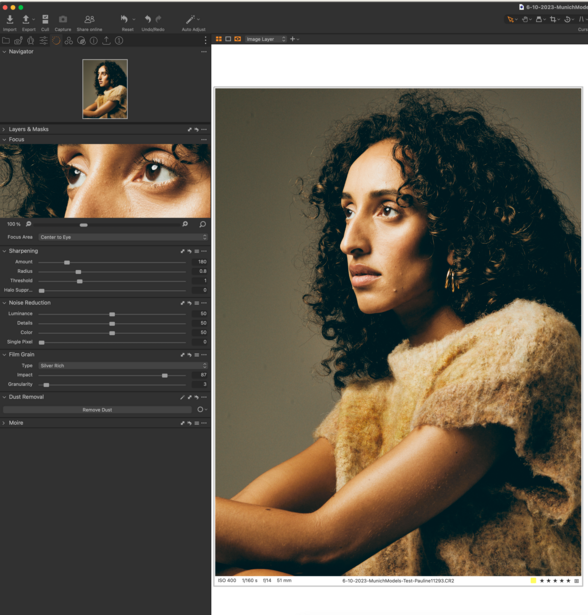

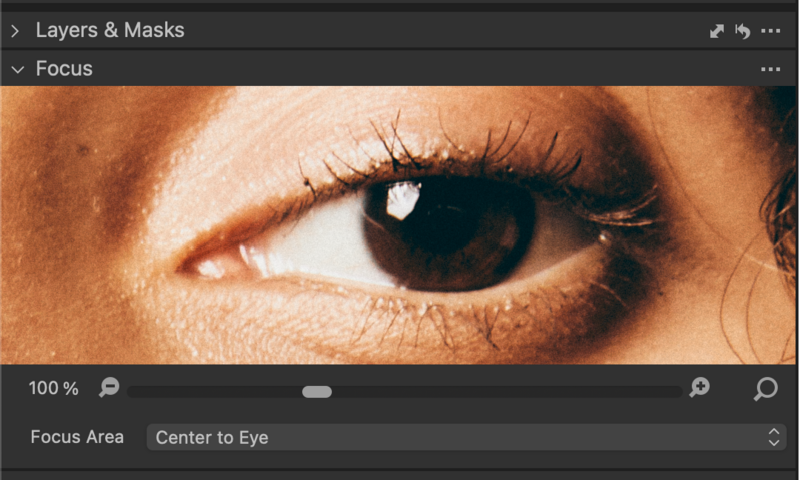

Snap To Eye

A feature I find useful is the ability to snap to eye in the focus preview. This speeds up selection, as I can immediately see the focus of the image. Before, I would have to zoom in or manually select the eye with the loupe tool. This feature saves time by eliminating a repetitive task.

Wishes

Of course, there are features I'd love to see in future updates. One is the ability to use the session name in the import window for file naming. When shooting in busy street locations, like Barcelona or Paris, I often hesitate to tether due to safety concerns or weather conditions. Being able to match image names with session names without manual typing would be helpful. Another addition would be longer-lasting Live sessions with client marks and comments saved even after the session expires. Lastly, the ability to add a vignette to the background only, without affecting the subject, would be a valuable feature, especially for a look I've been experimenting with.

Closing Thoughts

As much as I love Capture One, I want to be out shooting and not editing, which is why I welcome every update that cuts the time I spend in Capture One. For example, this week I spent an average of 7 hours in Capture One working on various files, a value only surpassed by Safari by some minutes. Nonetheless, there is lots to love about the new update, and I recommend Capture One to any photographer who shoots portrait or fashion work.

11 Comments

>For instance, changing the color of a garment or brightening the makeup on a model is now possible with Capture One AI masks.

That's... also possible with Lightroom. It's under "select person" and includes clothing, facial skin, body, lips, irises, hair, etc., depending on how granular you want to get. You can also "select object" for non-person item selections.

As I wrote in a comment below, this is definitely possible in LR/ACR. But I find the way it works in C1P more intuitive, since you just hover over certain parts of the image.

But I still hope they include the predetermined parts in C1P like it is in LR.

Can you batch things besides subject and background masks? Like if you select a coat in C1 in one photo, can you batch that AI mask to the rest of the photos with that same coat?

Of course both programs are lagging in current AI tech. The cutting edge right now is Aftershoot, which will literally determine your editing style and edit for you, cull for you, crop for you. Lightroom can't even batch auto crop. And even generative AI in PS isn't the best. Compared to DALL-E 3 it's a toy.

I have not tried Aftershoot yet, but I rarely have tons of photos to batch. I mostly do individual retouching on small numbers of photos. But I have heard good things.

But in both LR and C1P there is a lot of stuff which could still be better. Gen-AI in PS is good for small things like fixing "holes" in hair, removing skin wrinkles, or extending a grey background.

For everything else I find both the resolution and the generated content too poor. But it still runs on Firefly v01. Firefly v02 in the browser is much better, but in PS it is still in beta.

Of course Midjourney and DALL-E 3 are much better for Gen-AI. But we are in the "infant" stage with all of AI. It gets better from week to week.

The latest Google Gemini presentation (which is not Gen-AI) is pretty impressive. Google it.

There are a few differences in Capture One's approach to masking, and the snap to eye thing is actually very handy and something Adobe can, and should, replicate pretty easily.

The only other difference I saw of note is that you could dynamically select things on the image itself and make a mask out of it. If it worked as simply as it did in the demo video then that's also a very good thing.

That said, to suggest that these differences alone should make you shift your entire software platform is actually pretty nonsensical. It would have been helpful to differentiate whether you're talking about studio photography versus other types of images like portraits and such, but maybe that's beyond the scope of this article. It does warrant some clarification though.

Lastly, again, it should be noted that these are just differences in approach and Adobe can clone them at any time. They are not game changers by any means.

These recent new features sound interesting but also rather niche. My impression is that Capture One unfortunately has fallen behind in core functionality. What it badly needs is a top notch content-aware fill as well as similar and related functionality offered by Luminar Neo, Affinity Photo and, yes, Adobe.

But to be fair:

Content-aware fill is only available in Adobe Photoshop and not in Adobe Lightroom. And C1P is mostly comparable only to LR.

So even if you use C1P you could still use Adobe Photoshop (although you would have LR probably included) or Affinity Photo (or Luminar Neo).

I frequently use masks for what I do, mainly in Photoshop, as I find the tools in Lightroom just not good enough for accurate editing. I am always disappointed in the accuracy of the masks it produces, regardless of which masking approach I use. I always have to go in and manually fix the mask. Often there does not appear to be any rhyme or reason why one part of the image is reasonably well masked while another part is as messy as you like. I find the masks the auto functions produce are just not accurate enough. Do other people have these problems? And are Capture One's masking tools superior to Adobe’s? I would like to hear about other people's masking experiences. Being an Adobe user for over 25 years, I’ve not really had a look at alternatives!

I would say, having tried both, that the masking "quality" is pretty similar. Neither is 100% accurate. For detailed masking that needs to be pixel-perfect for high-end retouching, I still do it myself or outsource it to external vendors. For weddings or events, where accuracy is not the first priority, I find them both quite OK.

What I find superior in C1P is the speed of applying a mask itself. You just hover over certain parts. And the quality of the mask seems to increase when you zoom into the image.

I find the LR method rather cumbersome and unintuitive.

The only advantage in LR and ACR is that certain human features are predetermined (such as "eyes", "skin", etc.). I would guess C1P will add that in the future, but it is just a guess.

In a perfect world – in either program – you would just hover over certain parts of the image and create a mask AND could select predetermined parts (like eyes).

Yes, I use clipping paths all the time for many things, but I have a lot of years of experience with paths and it's second nature. It's much faster than playing around and adding or removing parts that shouldn't be selected. For stuff where precision doesn't matter, letting the app select can be fine, but it always needs refining. I believe most people have no idea the path tool even exists, and that's been my problem with Photoshop upgrades: selling the dream tool, not reality. It's getting better, but it's all about deciding what will be best for the specific job. I don't use Lightroom and I see a lot of people limiting themselves with it because they either assume PS is the same or they think PS is too hard to learn.

That snap to eye is pretty useful.

As far as masking goes, it's an OK first step, but still lacking. It's fine if you are editing 1 image, 1 look. But if you have a series (or an entire gallery), you have to manually make the adjustments for each AI layer (subject/background) for each image. You can't copy from one image to others and expect the AI layers to adapt to the next image with the adjustments like you can in LR. There is a manual workaround, though, if you are only doing a small number of images.