Artificial intelligence has come into our lives in various ways, and photography is no exception. In fact, did you know that it's already being used in smartphone cameras? But what does the future hold for merging artificial intelligence with subjective, artistic craftsmanship?

Recently, I came across an article detailing free and publicly available datasets for machine learning, including image datasets. A few examples: COIL-100, a database containing images of 100 different objects photographed at every angle of a 360-degree rotation; Labeled Faces in the Wild, a set of more than 13,000 labeled images of human faces intended for developing facial recognition applications; and the Stanford Dogs Dataset, which has 20,580 images across 120 dog breed categories. This got me reading about and researching the ways artificial intelligence is already affecting our photography, and how creativity and technology can co-exist to serve one another.

We Already Use Artificial Intelligence

Have you used image tracking on your digital camera or smartphone before? For example, have you tracked a moving object, or a single object surrounded by others, without losing focus on your chosen subject? Whether you shoot video or stills, this technology is designed to assist us and simplify our workflow, letting us direct more of our attention to what's important to us, namely creating meaningful images.

This is just one example. There is also what Microsoft has called a "drawing bot", which creates images from written descriptions of an object while, according to Microsoft, adding "details to those images that weren't included in the text, indicating that the AI has a little imagination of its own." And what about post-processing software that uses machine learning to analyze your photographs, adapt to your style and give you the edit the program considers to be the best version of each image? Luminar by Skylum offers an alternative to the commonly used editing programs, with an additional focus on machine learning.

Even Adobe has put a lot of time and resources into developing Adobe Sensei, which "helps you to work better, smarter and faster". Although that is a very vague description, solid examples include the face-aware liquify tool, which allows you to alter "expression or perspective without distortion"; improved stock search using search terms such as "depth of field"; and morph cut, which assists in editing interviews by smoothing out jump cuts. Another useful tool for many photographers is smart tags, which review the content of your photographs and generate metadata for them in a matter of seconds.

A similar technology has been created by Excire. This tool acts as a Lightroom plugin that helps you search for photographs in several ways. You can pick a reference image and Excire will find images similar to it. You can search by keywords, such as "beach". You can also search for faces, and even narrow the search to a certain age group or gender, or to people who are smiling.

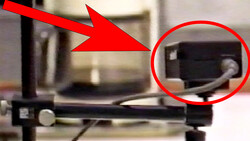

Lastly, another tool I came across is Arsenal, a smart assistant device that uses artificial intelligence to gather data and help you shoot "better". It is meant to help you capture various scenes and overcome their difficulties. For example, it promises to help you shoot long exposure scenes without the need for ND filters, which it does by combining several photos and "averaging the pixel values to generate long exposures". Other options include automatically taking and merging multiple photos to create the sharpest possible shots using your lens's sharpest aperture. This assistant promises to "take better photos in any condition" and is currently available for pre-order.
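To make the "averaging the pixel values" idea concrete, here is a minimal Python sketch of blending several frames into one simulated long exposure. It is not Arsenal's actual implementation, which isn't public in this article. The `average_frames` function and the tiny grayscale frames (plain nested lists of brightness values) are assumptions made purely for illustration.

```python
from typing import List

# A grayscale frame as rows of pixel brightness values.
# This format is a hypothetical stand-in for real image data.
Frame = List[List[float]]


def average_frames(frames: List[Frame]) -> Frame:
    """Average equally sized frames pixel by pixel."""
    if not frames:
        raise ValueError("need at least one frame")
    height, width = len(frames[0]), len(frames[0][0])
    for frame in frames:
        if len(frame) != height or any(len(row) != width for row in frame):
            raise ValueError("all frames must have the same dimensions")
    count = len(frames)
    return [
        [sum(frame[y][x] for frame in frames) / count for x in range(width)]
        for y in range(height)
    ]


# Three 2x2 frames: a static background (value 10) with a bright object
# (value 250) that sits on a different pixel in each frame.
frames = [
    [[250, 10], [10, 10]],
    [[10, 250], [10, 10]],
    [[10, 10], [250, 10]],
]
blended = average_frames(frames)
```

In the blended result, the pixel that never changes keeps its value, while the pixels the bright object passed through are pulled towards the background. That is the same smoothing of motion you would expect from a real long exposure, and the more frames you average, the fainter the moving object becomes.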

What Is The Future for Photographers and Artificial Intelligence?

What does our future look like, living, working and creating alongside artificial intelligence? Alex Tsepko, CEO of Skylum Software, has predicted that photography will become more intuitive, and that these new tools will allow us to bridge the gap between what our eyes see and what our cameras produce by using technology that reads every situation and analyzes the treatment it requires to give us the best possible final result.

I've only listed a fraction of what artificial intelligence can do to assist us. Research and development is ongoing, and the possibilities are endless. But what about our artistic vision? Will that disappear in the midst of automated processes that assist in composing, taking and editing photographs? Tsepko recognizes this potential worry and notes that "the art will always remain": whatever advanced technology you use, it still won't make you a great photographer. It won't bring out the vision and imagination that you have within yourself, but it will make photography more widely accessible to people at the beginner's level. With what they may consider the complexities and technicalities of photography taken away, they can create photographs that mean something to them. After all, as photographer Eric Kim puts it, in the ideal world we should "make technology our slave; not for us to be slave to our technologies".

How do you feel about artificial intelligence and photography? Do you think it has the potential to remove what photography is truly about or is it a helpful tool?

Lead Image by Franki Chamaki via Unsplash, used under Creative Commons.

4 Comments

Art is supposed to be a uniquely human endeavour. Keep the A.I. out of the picture.

But what about focus tracking, etc.? :)

An interesting topic for sure. And like you say, AI is already here in various forms and more on the way.

Imagine what the first autofocus users thought when the camera could take over. Is that really AI? We keep shifting the goal posts.

It's not that far off when we'll tell our editing software to remove a person or sharpen that face. Right now, the software only understands things like colour and brightness etc. In future it will recognise things like 'door', 'chair' and 'person'.

Then it'll be 'person sitting' or 'person standing'. One day, 'girl holding a cat', and so on. Hours spent clipping a person out of a background will largely disappear.