When talking about the differences between full-frame cameras and crop sensors, one of the biggest arguments in favor of full-frame sensors is the ability to produce images with a shallower depth of field. This was always my understanding of the subject as well. But after watching this video, I have seen the error of my ways. As it turns out, if all the variables are the same and the only thing changing is sensor size, the smaller the sensor, the shallower your depth of field.

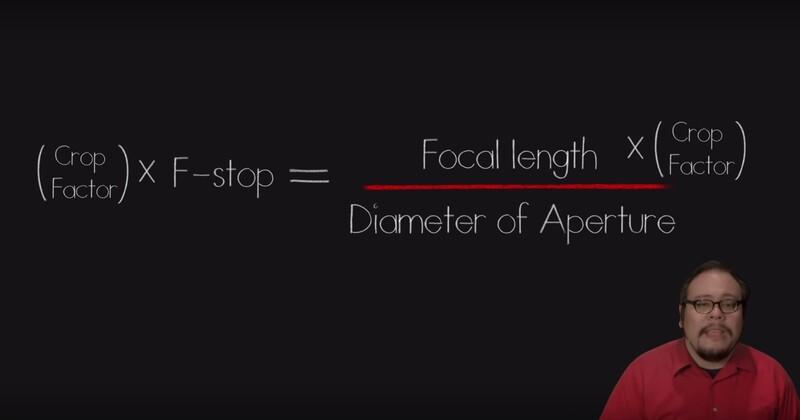

I'm not going to try to explain all the science and math from the video, because the video does a much better job than I could. My biggest takeaway concerned a sensor's crop factor and how it's used to calculate a lens's equivalent focal length. Most people multiply a lens's focal length by the sensor's crop factor to get the full-frame equivalent. The trick, though, is that you need to multiply the aperture (f-number) by the crop factor as well.
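The equivalence arithmetic can be sketched in a few lines of Python. This is a minimal illustration, not taken from the video: the function name and the 1.5x crop factor are assumptions. The multiplied f-number describes depth of field at matched framing and output size; exposure is still set by the lens's actual f-number.

```python
def full_frame_equivalent(focal_mm: float, f_number: float, crop_factor: float) -> tuple[float, float]:
    """Return the full-frame equivalent (focal length, f-number) of a lens on a cropped sensor.

    Multiplying the focal length by the crop factor matches the field of view; multiplying
    the f-number matches depth of field at the same framing and output size. Exposure is
    unaffected: it is still set by the lens's actual f-number.
    """
    return focal_mm * crop_factor, f_number * crop_factor


# Hypothetical example: a 50mm f/1.8 lens on a body with an assumed 1.5x crop factor.
eq_focal, eq_f_number = full_frame_equivalent(50.0, 1.8, 1.5)
print(f"Full-frame equivalent: {eq_focal:.0f}mm f/{eq_f_number:.1f}")
```

With these inputs it prints `Full-frame equivalent: 75mm f/2.7`. In other words, the 50mm f/1.8 frames and renders depth of field roughly like a 75mm f/2.7 on full frame.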

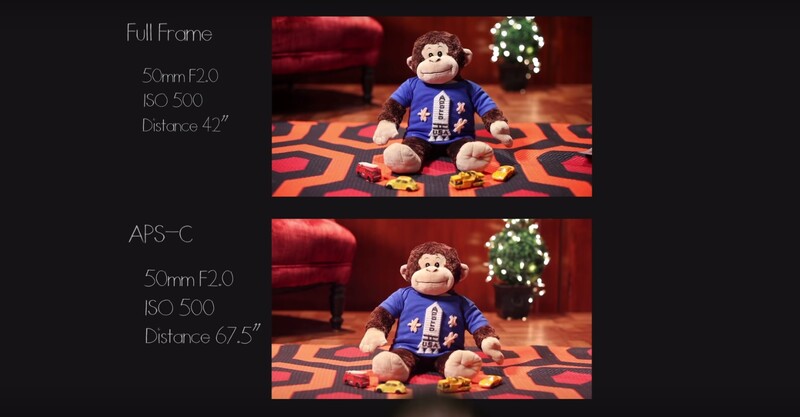

The reason full-frame cameras seem to have a shallower depth of field has a lot to do with the focus distance each format needs. The example below shows that to get the same framing on a crop sensor, you need to move farther from the subject. This added distance is what increases the depth of field on the crop sensor.
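The distance effect can be checked numerically with the standard thin-lens formulas. This is a sketch under assumed values, not the video's own calculation: a 50mm f/1.8 lens, a 500mm-wide subject that fills the frame width, sensor widths of 36mm (full frame) and 23.5mm (APS-C), and circles of confusion of 0.030mm and 0.020mm respectively. Depth of field uses the common hyperfocal-distance form.

```python
import math


def subject_distance_mm(focal_mm: float, sensor_width_mm: float, subject_width_mm: float) -> float:
    """Thin-lens subject distance at which the subject exactly fills the sensor width."""
    m = sensor_width_mm / subject_width_mm  # required magnification
    return focal_mm * (1 + 1 / m)           # from m = f / (s - f)


def depth_of_field_mm(focal_mm: float, f_number: float, coc_mm: float, distance_mm: float) -> float:
    """Total depth of field from the standard hyperfocal-distance approximation."""
    hyperfocal = focal_mm ** 2 / (f_number * coc_mm) + focal_mm
    near = distance_mm * (hyperfocal - focal_mm) / (hyperfocal + distance_mm - 2 * focal_mm)
    if distance_mm >= hyperfocal:
        return math.inf  # far limit extends to infinity
    far = distance_mm * (hyperfocal - focal_mm) / (hyperfocal - distance_mm)
    return far - near


# Assumed scenario: same 50mm f/1.8 lens, same 500mm-wide subject filling the frame.
ff_distance = subject_distance_mm(50, 36.0, 500)
crop_distance = subject_distance_mm(50, 23.5, 500)
ff_dof = depth_of_field_mm(50, 1.8, 0.030, ff_distance)
crop_dof = depth_of_field_mm(50, 1.8, 0.020, crop_distance)
print(f"FF:    {ff_distance:.0f}mm away, DOF {ff_dof:.1f}mm")
print(f"APS-C: {crop_distance:.0f}mm away, DOF {crop_dof:.1f}mm")
```

The APS-C camera has to stand about 1.5 times farther away, and that extra distance more than offsets its smaller circle of confusion, so its depth of field comes out deeper.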

Who here just had their mind blown?

94 Comments

Anyone who shoots regularly with both should already understand this.

A lot of people might understand it, but not truly get it. I think this video does a good job of presenting all the small details that go into this topic.

Obviously this writer felt someone out there would benefit from this. Not everyone is as knowledgeable as you, clearly.

Clearly.

But I've shot a lot of 35mm, 645, 6x6, and 6x7. Perhaps I take certain things for granted.

So what was the point of your original comment?

That anyone who shoots regularly with both should already understand this.

It appears that's not the case, even though with digital you have immediate feedback and can clearly see how much depth of field you have for a given focal length, aperture, and subject-to-film (sensor) plane distance.

If other people got something from this, great. It's useful info, but isn't going to change how most people shoot. Even if you didn't understand the concept, you've probably figured out you had to stop down more or less for the desired result.

It's meant to be informational, not change the course of history.

I want to get medium format cameras (6x4.5 and 6x7). I saw the John Hess video over on SLR Lounge, where John said that equivalent focal lengths didn't come about until the digital era.

I found a Mamiya lens catalog from the film era on the web a while ago, and it listed the 35mm focal length equivalents.

50mm is considered the normal lens for 35mm cameras. 80mm is a normal lens for 6x4.5 and 110mm is a normal lens for 6x7.

Prefers Film: Is this correct?

Something like that. For the RB67, there was no 110. It was the 90, the 100 (which I have never seen), and the 127. Both the 90 and 127 were super common, used the way a 50mm f/1.8 is on 35mm.

I had a 90, 150 soft focus, and 180 for my RB67. I don't recall which lenses came with the Mamiya 645 I borrowed before I bought my RB, but they were all shorter, even for medium format. The local camera store owner loaned me his Hasselblad for a while too. Nearly all the 16x20 prints in my portfolio were made with those three, and I will probably look for a used RB system again one of these days.

I want to get two Mamiya systems, the 645 and the RZ67. Even though each has its own lens family, a 200mm on a 645 has a longer reach than on a 67, just as a 200mm on APS-C has a longer reach than on 35mm.
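The "normal lens" and "reach" comparisons above can be approximated by scaling focal lengths by the ratio of format diagonals. This is a rough sketch. The image-area dimensions are nominal values treated as assumptions, and because 645 and 6x7 have different aspect ratios than 35mm, a diagonal-based equivalence is only approximate.

```python
import math

# Approximate image areas in mm (width, height); nominal values, treat as assumptions.
FORMATS = {
    "35mm": (36.0, 24.0),
    "645": (56.0, 41.5),
    "6x7": (70.0, 56.0),
}


def diagonal_mm(fmt: str) -> float:
    """Diagonal of the named format's image area."""
    width, height = FORMATS[fmt]
    return math.hypot(width, height)


def equivalent_35mm(focal_mm: float, fmt: str) -> float:
    """35mm-equivalent focal length, scaling by the ratio of format diagonals."""
    return focal_mm * diagonal_mm("35mm") / diagonal_mm(fmt)


for focal, fmt in [(80, "645"), (110, "6x7"), (90, "6x7"), (127, "6x7"), (200, "645"), (200, "6x7")]:
    print(f"{focal}mm on {fmt} frames like ~{equivalent_35mm(focal, fmt):.0f}mm on 35mm")
```

Under these assumptions, an 80mm on 645 and a 110mm on 6x7 both land close to 50mm on 35mm. A 200mm frames tighter on 645 than on 6x7, which matches the reach comparison above.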

MF cameras don't exactly excel at long shots. I'd stick with a wider lens for outdoor stuff, and a good portrait lens. That said, you could probably get both sizes, and every possible lens available, for a lot less than the $11k my kit was new. ;)

You are still making an error in your thinking. What he says is true, and your conclusion is true as well, but you cannot compare a 50mm image on APS-C to a 50mm on full frame. That's a different image, as you can see in your sample. Try comparing a 50 on APS-C to an 80 on full frame instead :)

Anyway, from a theoretical optics standpoint it's all true, but there is a reason photographers have lenses with different focal lengths, and that's where the full-frame advantage of shallower DOF comes back in.

This is one of the reasons I wish manufacturers would switch to labeling lenses by angle of view (field of view). Focal length isn't nearly as intuitive and leads to these kinds of articles.

You can do that on point-and-shoot and fixed-lens cameras, but if you sold a lens, there's no way of knowing what the field of view would be unless you know the sensor size.

John, the majority of people already buy lenses based on their sensor. Even now people have to be aware if the lens is designed for mirrorless systems. It also wouldn't make sense to purchase heavy full frame glass for a crop sensor. It's always important to know what system the glass is designed for, so it wouldn't cause any further confusion to switch to an angle of view label.

But how does the manufacturer know what sensor you're going to use? What if you own two compatible cameras with two different sensor sizes? The 50mm on one would have the same name as the 80mm on the other, but if you accidentally switched them, you'd get wildly different results.

Naming lenses by field of view really would add more confusion. On top of that, a lot of equations, like hyperfocal distance, rely on things like focal length.

Manufacturers design lenses based on sensor size already. This is why Sigma can easily adapt a single lens design to multiple camera systems. And just because a crop body can mount full frame glass doesn't mean that glass was designed for the crop body. Compatibility is only a convenience of mount type.

Equations can still be made all the same. I'm not arguing the removal of information, but to change its primary identifier to something more concrete.

tl;dr: Telling someone with no knowledge of cameras that your favorite focal length is 50mm gives them no intuitive sense of it. Saying that your favorite field of view is 40 degrees is much easier to intuit.
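Both sides of this exchange can be seen in the angle-of-view formula for a rectilinear lens focused at infinity, 2·atan(d / 2f). Here d is the sensor dimension, which is the piece a focal-length label leaves out. In this sketch the 36mm and 23.5mm sensor widths are assumptions; APS-C widths vary slightly by manufacturer.

```python
import math


def angle_of_view_deg(focal_mm: float, sensor_dimension_mm: float) -> float:
    """Angle of view across one sensor dimension for a rectilinear lens focused at infinity."""
    return math.degrees(2 * math.atan(sensor_dimension_mm / (2 * focal_mm)))


# Assumed sensor widths: 36mm for full frame, 23.5mm for a typical APS-C sensor.
for label, width_mm in [("full frame", 36.0), ("APS-C", 23.5)]:
    print(f"50mm on {label}: {angle_of_view_deg(50.0, width_mm):.1f} degrees horizontal")
```

A 50mm gives roughly 40 degrees horizontally on full frame, which is where the "40 degrees" figure comes from. The same lens gives only about 26 degrees on APS-C, which is why an angle-of-view label would depend on the body it's mounted on.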

I'm sorry, but I still have to completely disagree with the title of his article. Depth of field is a purely optical characteristic. If I put a 50mm f/1.8 lens on my Sony A7, shoot a photo in full-frame mode, and then switch to crop mode and take another photo without moving the camera, the two photos will have 100% identical depth of field. For any given lens, the depth of field is constant regardless of sensor size. It's just a different "crop" of the image projection. You can, however, say that smaller sensors tend to have denser pixel layouts, that denser pixel layouts create smaller circles of confusion, and that a smaller circle of confusion is less tolerant of a given lens's depth of field.

Thank you! I actually registered just to give you a thumbs up!

To you, too, a question, since I think you are misled here. Your statement disagrees with geometrical optics if the CoC has to be taken differently (i.e. when mounting the lens on an APS-C camera with the same pixel pitch). Look at the DOF equations of geometrical optics: http://toothwalker.org/optics/dofderivation.html, eq. 13. Your magnification, defined as f/(v-f), with f the focal length and v the object distance, doesn't change. Nor does the aperture N. But if you use a different CoC C, the numerator changes, and therefore so does the DOF, as reflected in the title. Can you explain why the DOF equation should be wrong in that case? Do you think you need not take a different CoC when cropping or mounting on a cropped sensor? Or did you implicitly compare the final images at different output sizes instead of the same output size?
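The crop-mode disagreement comes down to which circle of confusion you plug in. This sketch uses the standard thin-lens total-DOF expression in terms of magnification, 2Nc(m + 1) / (m² − (Nc/f)²), with m = f/(v − f) and equal entrance and exit pupils assumed. It has not been checked term by term against the linked eq. 13. The 2 m subject distance and 0.030mm full-frame CoC are assumptions. Dividing the CoC by 1.5 models enlarging the cropped frame to the same output size.

```python
import math


def magnification(focal_mm: float, distance_mm: float) -> float:
    """Thin-lens magnification m = f / (v - f), where v is the subject distance."""
    return focal_mm / (distance_mm - focal_mm)


def depth_of_field_mm(focal_mm: float, f_number: float, coc_mm: float, distance_mm: float) -> float:
    """Total DOF = 2Nc(m + 1) / (m^2 - (Nc/f)^2) for a thin lens with equal pupils."""
    m = magnification(focal_mm, distance_mm)
    denominator = m ** 2 - (f_number * coc_mm / focal_mm) ** 2
    if denominator <= 0:
        return math.inf  # subject at or beyond the hyperfocal distance
    return 2 * f_number * coc_mm * (m + 1) / denominator


# Same 50mm f/1.8 lens, same camera position, subject at an assumed 2 m.
full_frame = depth_of_field_mm(50.0, 1.8, 0.030, 2000.0)            # assumed FF CoC
crop_same_output = depth_of_field_mm(50.0, 1.8, 0.030, 2000.0)      # crop viewed at the same magnification
crop_enlarged = depth_of_field_mm(50.0, 1.8, 0.030 / 1.5, 2000.0)   # crop enlarged 1.5x to the same print size
print(f"full frame: {full_frame:.0f}mm, crop (same magnification): {crop_same_output:.0f}mm, "
      f"crop (enlarged): {crop_enlarged:.0f}mm")
```

If the cropped image is viewed at the same magnification, the CoC and therefore the DOF are identical, which is Daniel's point. If it is enlarged to the same output size, the permissible CoC shrinks and the DOF with it, which is the article's point.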

I think you meant to reply to Daniel, not me.

However, nobody here says the math is wrong.

The title is just very misleading, because the required subject distance has a far greater impact on the overall image than a smaller sensor with its smaller pixels (and those smaller pixels are the only reason DoF is shorter). But please do read the comments below; many more of them explain the subject over and over (including John Hess from the video).

Yes, I did. And I still find the original comment misleading. Hess explained it again below. What is missing is that Daniel Karr doesn't state the output size he is using to compare images, so his post is as misleading as the title, imo. Only if he compared the cropped image unmagnified against the original image size would the DOF be the same, because the magnification would not change.

Yeah, but DoF itself doesn't change since the optics don't change. That's what he states.

Again, the rest is just a definition of what you call "sharp", and that of course depends on magnification and viewing distance.

Only as long as magnification is constant, i.e. when looking at a smaller, cropped final print of the FF image. CoC is part of the DOF equation, so changing the output size changes magnification, similar to what the author wrote below in reply to one of your comments.

Another thumbs up. Thank you for describing this accurately. I was pretty sure my 50mm didn't magically project different light when I switched to a smaller sensor body :)

But in that instance you're changing the resolution, which, if I'm not mistaken, plays into the equation as well.

Sorry Jason, I don't think you really understand the concept of depth of field. Resolution has nothing to do with the equation. Are you saying a 36 megapixel camera has a different depth of field than, say, a Sony with a 12 megapixel sensor, when both are FF?

I think you need to pull this article because it is misleading.

That's a good point. In the video's explanation, where they talk about the fuzzy circle whatever and show how depth of field drops as sensor size drops because the blur affects more pixels, I took that to mean resolution was part of the cause, even though they didn't specifically say so... That makes it hard to understand how their explanation of individual pixels being affected actually comes into play.

I guess it depends on your strict definition of depth of field. The guy in the video seems to be defining DOF as the DOF that a camera can resolve. As a practical definition of DOF, it's far more useful to think of it as a purely optical phenomenon, otherwise simply downsampling an image in photoshop can "change" the DOF.

There is a lot of misunderstanding happening here. Jason explains things very well, but this is something that takes a lot of thought to get your head around.

You need to be thinking about magnification (this is the key word that has been missed). With a smaller sensor the image is magnified and because of the circle of confusion the image will have less perceived depth of field.

Simply cropping an image in Photoshop will not show any difference, but when you resize the image and compare before and after, the image you perceived as sharp will look soft once resized.

The same thing happens with print size. If you take a sharp image printed at 8X10 and then print it at 16X20, the 8X10 will look sharper. Since depth of field is defined by what we find acceptably sharp, the 16X20 is no longer acceptably sharp and therefore has a shallower depth of field. The more you magnify the image (the larger you intend to print), the more accurately you need to focus to get a sharp image. With a smaller sensor, you have to magnify the image even more to get the same print size as with full frame.

When people switch from an APS-C sensor to full frame, they talk about how much better the image quality is. On an APS-C sensor, if you focus accurately and adjust your focal length and f-stop (while keeping shutter speed the same), there will be little to no difference in image quality.

In reality only one point is sharp, and depth of field is only what we perceive as sharp.
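The print-size argument can be made concrete by working backward from an acceptable blur on the print to a permissible circle of confusion on the sensor. This is a sketch under stated assumptions. The 0.2mm acceptable print blur is an assumed figure, both prints are viewed from the same distance, and enlargement is taken as the ratio of diagonals, which ignores any cropping needed to fit the print's aspect ratio. The function name is hypothetical.

```python
import math


def permissible_coc_mm(sensor_w: float, sensor_h: float, print_w: float, print_h: float,
                       print_blur_mm: float = 0.2) -> float:
    """Largest blur circle on the sensor that still looks sharp on the print.

    print_blur_mm is an assumed acceptable blur on the print at a fixed viewing distance.
    All dimensions are in millimetres.
    """
    enlargement = math.hypot(print_w, print_h) / math.hypot(sensor_w, sensor_h)
    return print_blur_mm / enlargement


# 8X10 and 16X20 inch prints, converted to millimetres.
ff_8x10 = permissible_coc_mm(36.0, 24.0, 203.2, 254.0)
ff_16x20 = permissible_coc_mm(36.0, 24.0, 406.4, 508.0)
apsc_8x10 = permissible_coc_mm(23.5, 15.6, 203.2, 254.0)
print(f"FF 8X10: {ff_8x10:.4f}mm, FF 16X20: {ff_16x20:.4f}mm, APS-C 8X10: {apsc_8x10:.4f}mm")
```

Doubling the print size halves the permissible CoC, and the smaller APS-C frame needs more enlargement for the same print. Both changes tighten the focus tolerance described above.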

"With a smaller sensor you will have to magnify the image even more to get the same print size as the full frame."

Or not. You're confusing negatives and sensors.

No, I'm not confusing negatives and sensors. I am talking mathematics. Crop factor is not as accurate as magnification. There is a magnification factor of about 1.5; it's that simple.

Actually, Jason is correct. Switching on "crop mode" on those cameras simply crops the photo *in camera*, similar to if you took the photo in Photoshop and cropped the image so that the subject fills more of the frame, giving the illusion of a longer focal length

...all at the expense of overall image resolution.

Felix, different Megapixels on the same SIZE sensor has to do with pixel density.

i.e., more pixels per square inch. The sensor size itself stays the same regardless of megapixels, unless of course one is FF and one is APS-C, or even medium format.

Simple explanation is this: Take the FF sensor and decrease the pixel size. Then, according to the concept explained in the video, you have decreased DoF.

But you still have a FF sensor and the same lens. So what just happened?

I agree. I didn't think about that when watching the video. I'll have to reach out to the creator and see if they have an explanation.

Only subject distance (focus distance) and aperture have an effect on perceived DoF. That's the look.

The rest is just theory on when to consider a circle small enough to be called sharp. And that again depends on viewing size and distance (and your eyesight of course)

Addition: Lenses and sensors just crop in or out and thereby simply scale the image contents, but they don't change foreground-background ratios (which again is relevant for perceived DoF)

For your point to hold, doesn't "perceived" have to refer to a particular format or medium? Like a print, perhaps. Once you bring that into the real world, resolution and pixel size do in fact play a part. Don't try to dismiss it; people used to print before computers.

I didn't.

If you consider print viewing distance, size, resolution, and circle of confusion, then mathematically that is exactly what you have done. Again, what holds mathematically is not necessarily visible, and acceptable focus is somewhat subjective.

Not changing the resolution per se, changing the enlargement ;)

Jason, you're right about changing resolution playing into the equation, and I guess that's kind of my point. It's not so much the smaller sensor size that changes apparent depth of field as the smaller pixel size. So a smaller sensor with the same resolution would have a tighter tolerance for depth of field. If you compared a 42MP full-frame camera with a 16MP APS-C camera, the smaller sensor would actually have larger pixels, which would mean a deeper apparent depth of field (or a larger circle of confusion).
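The pixel-size comparison can be checked with a rough pixel-pitch estimate. This sketch assumes square pixels covering the whole sensor and nominal dimensions of 36 x 24mm for full frame and 23.5 x 15.6mm for APS-C. It only checks the arithmetic. It does not settle whether pixel pitch should set the circle of confusion, which is the point being debated.

```python
import math


def pixel_pitch_um(sensor_w_mm: float, sensor_h_mm: float, megapixels: float) -> float:
    """Approximate pixel pitch in micrometres, assuming square pixels covering the sensor."""
    area_mm2 = sensor_w_mm * sensor_h_mm
    return math.sqrt(area_mm2 / (megapixels * 1e6)) * 1000


print(f"42MP full frame: {pixel_pitch_um(36.0, 24.0, 42):.2f} um")
print(f"16MP APS-C:      {pixel_pitch_um(23.5, 15.6, 16):.2f} um")
```

Under these assumptions the 16MP APS-C pixels come out slightly larger than the 42MP full-frame pixels, consistent with the comparison above.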

It's not the pixel size either - it's the smaller circle of confusion.

A 50MP FF sensor, given the same lens, will shoot a deeper DOF than an 18MP APS-C sensor for the exact same print.

Yes, but then you will need to enlarge the crop image more than the other to produce the same final result, which also reduces sharpness and so decreases the depth of field, again on the final image. Nobody looks at the image on the sensor; it's always enlarged somehow, and the smaller the sensor, the more you have to enlarge it.

Daniel, while your analogy is accurate, the thing about turning on "crop mode" on an A7 is that it is similar to a *digital* crop: the amount of the sensor used in the resulting photo is decreased in order to add more "reach" to your lens, at the expense of resolution.

It's NOT the same as how focal length of the same lens covers the ENTIRE sensor on two different cameras, one full frame and one APS-C.

The distance between a lens element and the sensor is PHYSICALLY DIFFERENT on cameras of varying frame sizes; that's not true of the Sony A7 when you switch "crop mode" on or off.

And so, the article title is actually correct.

Think about it: all else being the same, a 100mm on full frame effectively becomes a 160mm (in field of view) on a 1.6x crop APS-C. When you *increase* focal length, DOF *decreases* at the same f-stop.

The reason it *appears* shallower on a full-frame -- like in the above example with the plush monkey -- is because you actually need to be PHYSICALLY CLOSER to the subject in order to have it fill the same amount of frame space, thereby decreasing focal distance, which blurs the background more.

Totally agree. I read that too late; it would have been better to post my answer here than at the end of the thread.

Thank you, Daniel. I just posted (admittedly in a state of slight frustration). Had I read your post first, I would not have needed to. You are correct.

Your statement disagrees with geometrical optics if the CoC has to be taken differently (i.e. when mounting the lens on an APS-C camera with the same pixel pitch). Look at the DOF equations of geometrical optics: http://toothwalker.org/optics/dofderivation.html, eq. 13. Your magnification, defined as f/(v-f), with f the focal length and v the object distance, doesn't change. Nor does the aperture N. But if you use a different CoC C, the numerator changes, and therefore so does the DOF, as reflected in the title. Can you explain why the DOF equation should be wrong in that case? Do you think you need not take a different CoC when cropping or mounting on a cropped sensor? Or do you compare the final images at different output sizes?

Doesn't a smaller field of view necessitate a shallower depth of field in your example?