Adobe MAX is a three-day conference in Los Angeles, CA attended by designers, illustrators, photographers, and social media content creators. Attendees can watch presentations by Adobe representatives and attend educational sessions given by independent creators. This year, generative AI was mentioned in many presentations. It is implemented differently across the programs that make up Adobe’s Creative Cloud, and users often feel they can communicate more effectively with the programs that have AI enhancements.

I spoke with Deepa Subramaniam, Vice President of Product Marketing, Creative Cloud at Adobe, to discuss Adobe’s intention with widespread AI implementation (often referred to simply as Firefly) and how photographers might use this technology to materialize their creative vision. AI can be used to remove unwanted elements or create desired details and scenes not present in the original image capture. The feature has been available for less than a year, meaning there is much to explore about how it might best be utilized in a creative workflow.

The first thing we did was put forward some internal tenets and principles to guide how we explore generative AI and build with it. We were very creator-first from the beginning, being thoughtful about how we want creators to play with this, and how can we support them in learning about this new technology. From day one we were transparent and open, and you can see that in how we brought this innovation to market through open public betas built on dialogue with the community.

Through the open beta process, we have learned about new workflows that we weren't even aware of. It's been amazing to see how creators are using Generative Fill in Photoshop not only for the generated output in production workflows but for ideation as well. It wasn’t clear to us that that was a possibility until this information came to us through that public data.

As photographers, we often meet new technology with a mix of excitement and apprehension. I always wish that I could utilize the latest hardware and software technology, but that my clients, and even my competition, could not.

Change brings innovation and that innovative change powers new creation, new output, and new ideas. It kickstarts the next age of that medium. We are in one of those moments right now with generative AI and photography. Some creators are really ready to dive in and start playing and we want to put that innovation in their hands. Some are more reserved, thinking, okay, I think I need to be exploring this. They understand this is not going away, but are still sort of at the start of their journey of understanding how to fold it into their workflows. And we want to foster that dialogue with those users and help them in their exploratory journey.

I think for many people, AI just seemed to come out of nowhere in the same manner that cryptocurrency and NFTs were unknown one day and seemingly in the public consciousness the next. Photographers were rightly concerned about what AI means for their artistry or their commercial business.

Our philosophy around this has always been to be very creator-centric. So creators should ask whatever questions come to their minds. The technology is there for them to understand and personalize. And it's been a beautiful thing to witness. The public betas have been the forum by which we have a dialogue with our users. We are engaging with the community through these betas to have that conversation. It's not just even about shipping the betas and seeing what happens, it's dialoguing on social media, tons of in-person events, and working closely with creators to understand how they're folding into their workflows. Hearing their questions, answering them, going back, and having discussions internally. It's just been a two-way dialogue, which I think is critical to how this technology is going to innovate and continue to be useful to creators. And honestly, I think that's a real reason why we're getting the momentum and the adoption that we're getting. We announced just yesterday that 3 billion images have been created with Firefly, a billion of that in the last month alone. People are leaning in to explore this technology because there's real usefulness there, and there's just a myriad of ways to use Firefly’s capabilities to create. And so each creator is on their own individual journey to figure out how to pull that into their workflow. And we are here to support that, to learn from that, and to build on top of that.

It’s worth noting also that sometimes photographers might be using AI and not be aware of it because it's so baked into the application. Lightroom itself has a long history of embracing AI, but not yet on the generative Firefly-powered front. There's broad artificial intelligence which Lightroom utilizes, and then there's generative AI, which is sort of a subset of AI built around the creation of new pixels. We've always explored ways to use AI to speed up workflows and just make the act of creation easier. Lightroom has a bunch of new capabilities that are AI-powered. De-noise is one. In the release that we announced yesterday, we have AI-powered lens blur that can add a blur effect to an image regardless of the hardware used to create the image. Our philosophy is, let's help you, the photographer, the creator, work better, faster, smarter.

I think the problem for some photographers is the feeling that AI technology will replace them.

Imagine you took a photograph that didn’t come out exactly as you pictured it. The power of Firefly is like, what if it's not exactly what you wanted, and you want to be able to perform a generative edit that transforms the image into exactly what's in your mind's eye? Creators now have the possibility of doing that, whether it's in Express, or whether it's in Photoshop. Firefly is a part of the creative process, not a full replacement of the creative process because that's just not possible. The human has to be driving that creative process. Firefly gives you creative control, and the precision to bring to life exactly what is in your mind's eye.

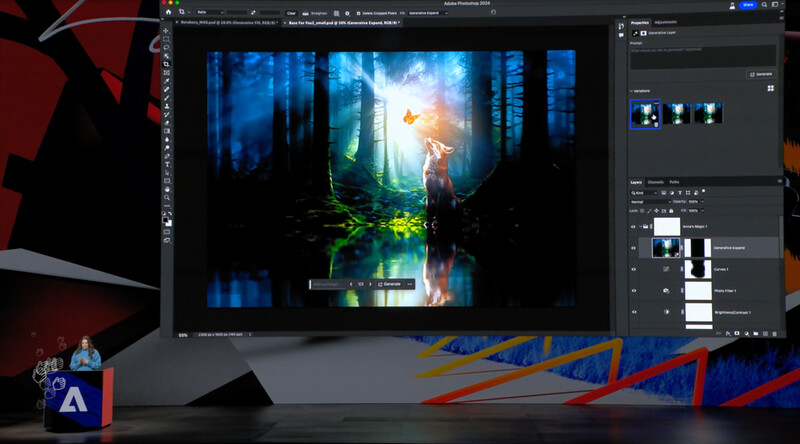

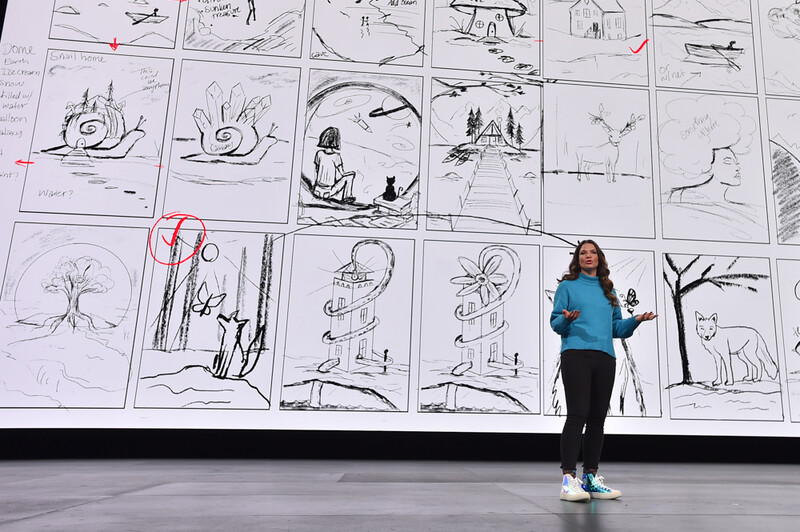

Anna is a prolific creator using Photoshop for 20-plus years. She is a Photoshop expert but she is also on this journey of understanding what Firefly can enable and folding it into her creative process. One thing she highlighted yesterday was she used Firefly to help ideate at the start of the whole process of deciding what she was going to create. She was just sketching ideas as simple drawings and then she used Firefly to turn those sketches into something a little bit more visual to have feedback and conversation with her team. She used Firefly image generation to bootstrap the ideation process. And then when she moved beyond the ideation process to the actual creative content creation, she used the Firefly-powered capabilities in Photoshop, specifically generative fill and generative expand, coupled with everything else in Photoshop, compositing, selection, layers, blurring, masking, to create a final output that was actually an amalgamation of many photos, some generated, some real-life photographs. The final result was a beautiful new creation.

It is interesting that she didn’t fully understand the technology at the point that she started using it.

Right. She's exploring and seeing how Firefly can assist her creative process, and we're seeing that time and time again with the feedback we're getting through these public betas. People are learning new ways of using this technology to their benefit.

6 Comments

What a load of pure corporate BS.

Their "vision" is to sell as much Generative AI as they can, as fast as they can, before a judge finally understands how it really works and shuts the whole thing down.

Adobe doesn't give a moldy fig about "creativity".

The "vision" of every single company on this planet is to sell. It's my vision. And possibly yours as well. That said, there is nothing inherently wrong with that. Tesla wants to sell cars. Period. If they can make a sales pitch about their cars being better for the environment or that their cars are safer or whatever, there is nothing wrong with that. The fact that their main goal is to make a lot of money doesn't mean that somewhere in the process they don't actually believe their talking points. Whenever I pick up my camera for a client, my number one goal is to make money. Still, I care about what I'm doing. I'm passionate about photography and I truly want people to have the photographs I am taking. So I don't see any reason to single out Adobe for wanting to sell something unless we are going to call out every single company, large or small doing business with the public.

Also, one of the interesting things about the Adobe MAX event was when they had actual engineers or designers (not sure exactly what they should be called) give demos of software features that they are working on. These presentations were very different from the ones done by social media influencers and such on the previous day. The earlier presentations were expertly delivered, funny, creative and very memorable. The presentations by the actual engineers who were working to create features in these programs were nowhere near as fluid. These people aren't used to being onstage and their delivery wasn't perfect. They didn't have funny jokes ready to go. They didn't have a back and forth with the audience. But you could clearly see they were passionate about the software they were working on. It's hard to fake that. And non-professional presenters like these would never be able to pull it off. Again, every one of those engineers was working for Adobe to make money. But that doesn't mean they don't actually care about what they are doing.

I think my point didn't really get across.

I'm a former software engineer, I've worked for big companies, so I'm quite familiar with the need to make money, and have no issues with Adobe on that score.

My comment was in reference to their professed attitude to "generative AI", which I find disingenuous. As a photographer (and software engineer) I see this so-called "AI" as a well-obfuscated way to create mashups of the work of actual human artists and creatives - without regard to copyright - and I think some big lawsuits (already in progress) will eventually make that clear. Adobe's engineers no doubt understand this quite well.

So I think it's a bit phony for Adobe to tell us how their boundless support for creatives is what's behind the rapid addition of generative AI to their products.

No, I partly disagree. It's now mostly about shareholders, and if that means sinking a company to suck out the profit and move on to the next one, they see it as fair game.

I don't know about Adobe but I think the development of generative AI is going to be determined by where people see the images rather than how the images are made. I live in Las Vegas and there's a buzz in town about two new venues that are filled with generated imagery. The most popular one is The Sphere and the other one is a South Korean installation called "Eternal Nature" at Arte Museum Las Vegas. People in this town don't usually get impressed very often but many have been excited by the potential in these for interactive and performative public generated imagery. The way to think about it is to imagine a generative visual artist creating imagery in real time for a crowd the way that DJs mix music at events like EDC.

Until now, digital photography has mostly been tied to social media platforms and it's really easy for anybody to shoot for small screens. But a lot of AI generated imagery in the future will probably be for higher resolution screens and HDR monitors that show every flaw. For example, the screen in The Sphere is 16K by 16K and there are few photographers that can shoot images right now that would look good on that type of display. That's an extreme example, but a lot of what puts photographers out of business in the future might have to do with the new screens and avenues that people will be using to view images. Most photographs that have been shot for social media are going to look pathetic next to generated AI images at extremely high resolution.

So you're saying the endless race for more megapixels is going to continue and we are going to forever be upgrading cameras year after year...? *Sigh* Really not looking forward to that ;)

(BTW, Adobe also showed some really impressive upscaling technology for video at the MAX conference, so it is possible that one day we won't have to increase our megapixels in order to create 16K content.)