As many of you know, Lee and I recently moved to Puerto Rico, and with that move, we are having to completely redesign our new studio space. In today's video, we tackle our in-home network and wireless Internet connection. Surely the limitations in Puerto Rico will prove to give us trouble... or will they?

If you remember a few years ago, we decided to upgrade the network in our photo and video studio to include 10GbE connectivity. Because we often need multiple users to be able to pull large files from our Synology NAS all at the same time, we wanted to see if switching from a common 1 GbE system to a much faster 10 GbE system would actually make a difference when editing in Adobe Premiere. As you can see in the older article titled “How to Upgrade Your Network to 10 Gb/s and Speed Up Your Workflow,” not only were we able to achieve nearly 10 times the transfer speeds, but we were also able to greatly improve the overall editing experience for everyone in the Fstoppers office.

Since we have moved out of the Fstoppers office in Charleston, not only do we need a Network Attached Storage that is capable of transferring our files at this higher bandwidth, we also need to build an entire 10 GbE home network from scratch. Luckily the house we are renting already has Cat 5e cable installed throughout the entire building, but trying to understand the home's existing switches and installation wasn't easy.

The Home Network

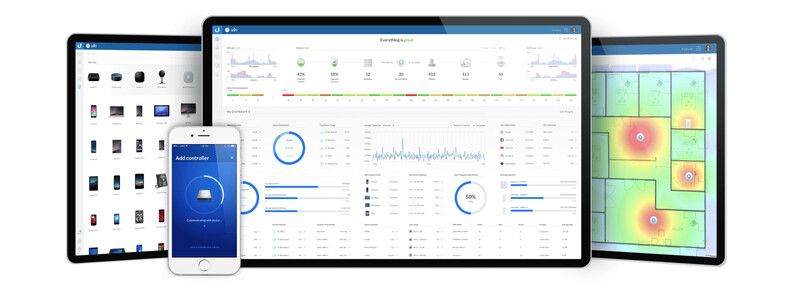

Before we even considered getting Wi-Fi and our NAS installed, we first had to set up the main 10 GbE network in the home. Over the years we have bought our fair share of switches, routers, and all-in-one wireless routers from a variety of popular manufacturers. We even at one point switched over to Apple's AirPort Extreme Base Station in the quest to find the most powerful yet easy-to-use hardware. Back in 2016 I decided to get rid of nearly everything and build a completely new system using only components from the highly recommended company Ubiquiti. Ubiquiti offers amazing enterprise-grade products that can be used for big commercial clients, but they are still easy enough to set up for less technical customers like me. I first noticed Ubiquiti's UniFi systems in major hotels and restaurants that required multiple access points. I figured if their systems were good enough for these types of applications, they would certainly be perfect for Fstoppers.

Unlike other basic "all-in-one" systems I have used, the UniFi system comes in individual components that allow you to customize your network to fit your exact needs. In some ways, an all-in-one wireless router is sort of like a boombox whereas a UniFi system is like a highly-tailored home stereo made up of your favorite individual components. That being said, I can easily wire up a home stereo in my sleep whereas I almost always run into issues when installing anything network related. Luckily the UniFi Cloud Key made my life easy, but more on that later.

The three main components we wound up using were the UniFi Security Gateway Pro 4, three UniFi US-XG-6POE switches, and Ubiquiti's newest UniFi Cloud Key Gen 2 Plus. The gateway offers advanced routing and a reliable firewall, the switches give us full 10 GbE PoE connections in the more common RJ45 jacks, and the Cloud Key not only makes setting up and monitoring the whole system extremely easy but also has a 1 TB hard drive for storing video from the UniFi G3 security cameras (which we are installing at the moment).

If you are like me and are a bit overwhelmed by all the options Ubiquiti offers, or if you just want to know how all of this stuff works together, definitely check out the video below. Tony Hunt has an amazingly thorough video showing what each piece of equipment does and why having a modular system is better than your typical all-in-one wireless router.

Once I wired everything together, I found out that although the entire house had fairly modern Cat 5e cable, all of these cables were running through older TP-Link switches that were only 10/100 Mbps. Rather than buy two new 24-port switches, we decided to keep the existing switches in place for 90 percent of the house and only tap into very specific connections for our newer 10 GbE connections. All in all, we currently have about eight connections in the house running at 10 GbE while the others are still connected at 100 Mbps.

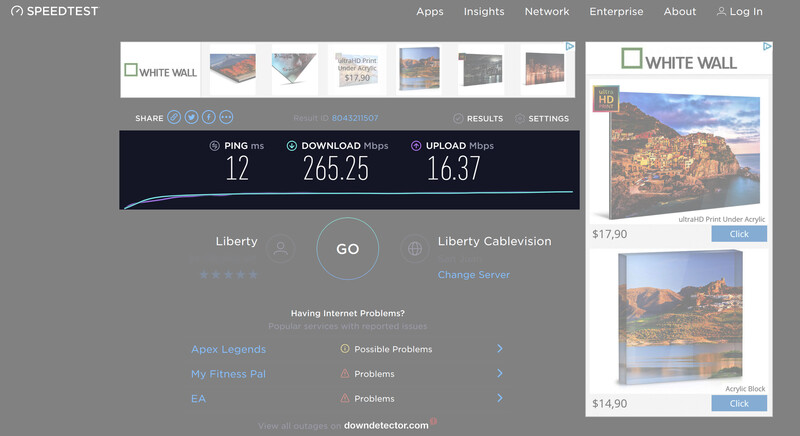

As you can see from the speed test below, all of the wired computers and devices are getting around 250 Mbps download and between 15 and 26 Mbps upload (and our Liberty Internet bill is around $100 for those curious).

The Wireless Network

Once we had the main brains of the server up and running, it was time to get high-speed Wi-Fi throughout the house. As I mentioned in the video, most of the homes in Puerto Rico are built with poured concrete which offers a much more sturdy construction compared to wood-framed homes found in the continental U.S. However, these concrete structures make it near impossible to get a strong Wi-Fi signal if you are far from your main wireless router. This is where multiple access points come to the rescue.

For this system, we decided to place a UniFi nanoHD Access Point (AP) on each floor of the building so that we could have the strongest Wi-Fi signal possible. Unlike other systems that rely on extending existing networks, assigning new IPs that must be handed off, or building complicated mesh networks that depend on Wi-Fi signals in order to mesh, the UniFi system creates a truly seamless integration. Because each access point is hardwired into the network, each AP has the strongest signal possible. These small nanoHD APs allow smooth handoff between devices as you move from floor to floor and allow Wi-Fi-heavy applications like FaceTime and video streaming to continue without interruption.

Back in our office in Charleston we had three of the older UniFi APs and they were able to give the entire property fast Internet. Because of the size of this house and the concrete, we opted for four APs, which has allowed us to have fast Wi-Fi anywhere we connect. Also, because our home's Cat 5e cable is capable of supplying each AP with power over Ethernet (PoE), we didn't have to run extra power plugs to each unit. This allowed for a much cleaner install while keeping all our AC outlets free for other devices.

I'm not sure what the limits are on Wi-Fi speeds, especially when connected with an iPhone, but as you can see in the video, I am reliably able to get around 150–250 Mbps download speeds and 15–25 Mbps upload speeds straight from my cell phone. Even though I was paying for high-speed 250 Mbps Internet with Xfinity in Charleston, I don't think my cell phone was ever able to get speeds as fast as we are getting down here in Puerto Rico. In every way, our Internet experience here is better than it was in the States. As a side note, even our AT&T service is faster here, whereas our cell service in the U.S. always seemed to be throttled, or maybe the bandwidth was just oversaturated in most major cities.

The Network Attached Storage

Now that we had fast wired and wireless Internet running throughout the new studio, we needed a way to store all our files locally. Lee and I have both been using Synology's NAS boxes for years now, and just like the UniFi system, these NAS devices are super reliable and extremely easy to use.

When we travel, we often bring along the small Synology DS416slim. We love this NAS because it's small, has two RJ45 jacks, which allow you to directly connect up to two computers, and it holds four SSDs for extremely quick read and write speeds. Back in 2017, we upgraded our beloved Synology DS1817+ NAS to our first 10 GbE rack NAS, the Synology RS18017xs+ (which is $6,000). We did a full article about that process if you want to check it out.

With this new build, the kind folks over at Synology sent us their new DS1819+ DiskStation. Just like the other NAS units in this series, the DS1819+ comes with four 1 GbE RJ45 connections that can be bonded into one 4 GbE connection. While you aren't going to get a true 4 Gbps with this native configuration, you do gain some extra bandwidth and redundancy if one of the connections stops working. Since we were looking for massive speed to help pull files into multiple computers running Adobe Premiere, Photoshop, and Lightroom, we opted to upgrade our DS1819+ with the Synology E10G18-T2 dual-port 10 GbE PCIe card. This card gives you two 10 GbE RJ45 jacks, which offer, in theory, ten times the speed of a single 1 GbE connection.
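Why a bonded link does not behave like one 4 Gbps pipe can be sketched in a few lines of Python. This is a simplified model, not Synology's implementation: it assumes the bond pins each flow (a source and destination pair) to a single member port by hashing, which is how link aggregation commonly balances traffic. The function names, the CRC32 hash, and the example IP addresses are all made up for illustration.

```python
import zlib

LINK_SPEED_GBPS = 1.0   # each member port is 1 GbE
NUM_LINKS = 4           # four built-in 1 GbE ports bonded together


def pick_link(src: str, dst: str, num_links: int = NUM_LINKS) -> int:
    """Map a flow to one member link (hypothetical hash policy)."""
    key = f"{src}->{dst}".encode()
    return zlib.crc32(key) % num_links


def per_flow_speeds(flows, num_links=NUM_LINKS, link_speed=LINK_SPEED_GBPS):
    """Split each link's capacity evenly among the flows hashed onto it."""
    by_link = {}
    for flow in flows:
        by_link.setdefault(pick_link(*flow, num_links), []).append(flow)
    speeds = {}
    for members in by_link.values():
        for flow in members:
            speeds[flow] = link_speed / len(members)
    return speeds


if __name__ == "__main__":
    nas = "10.0.0.10"                                    # made-up addresses
    editors = [f"10.0.0.{n}" for n in (21, 22, 23, 24)]
    for flow, gbps in per_flow_speeds([(e, nas) for e in editors]).items():
        print(flow, f"{gbps:.2f} Gbps")
```

In this model a single computer copying from the NAS never exceeds 1 Gbps no matter how many ports are bonded. The extra ports only help when several computers pull files at once, and even then two flows can land on the same port and share it.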

With all NAS enclosures, you still have to install your array of RAID hard drives. We have a great relationship with Seagate, and Lee somehow was able to get eight brand-new Seagate 14 TB IronWolf Pro HDDs sent to our new office. Neither of us even knew hard drives had gotten up to 14 TB yet, and shockingly, with this configuration, our much smaller and quieter DS1819+ NAS actually has more storage space than the RS18017xs+ that we left back in Charleston.
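For a rough sense of how much space eight 14 TB drives provide, here is a back-of-the-envelope sketch in Python. The RAID layout is not stated above, so the redundancy counts below are assumptions chosen only to show the range. Real usable space will also be somewhat lower after filesystem overhead.

```python
DRIVES = 8
DRIVE_TB = 14            # decimal terabytes, as printed on the drive label


def usable_tb(drives: int, drive_tb: float, redundancy_drives: int) -> float:
    """Capacity left after setting aside whole drives' worth of redundancy."""
    if not 0 <= redundancy_drives < drives:
        raise ValueError("redundancy_drives must be between 0 and drives - 1")
    return (drives - redundancy_drives) * drive_tb


def tb_to_tib(tb: float) -> float:
    """Convert decimal TB (10**12 bytes) to binary TiB (2**40 bytes)."""
    return tb * 10**12 / 2**40


if __name__ == "__main__":
    # Assumed layouts: none stated in the article.
    layouts = (("no redundancy", 0), ("one-drive", 1), ("two-drive", 2))
    for label, spare in layouts:
        tb = usable_tb(DRIVES, DRIVE_TB, spare)
        print(f"{label:>14}: {tb:.0f} TB = {tb_to_tib(tb):.1f} TiB")
```

The TiB column is there because operating systems often report capacity in binary units, which is why a volume can look smaller on screen than the sum of the drive labels.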

Once everything was connected and set up, the final test was to see how fast we could transfer files to and from our new Synology NAS through our fast 10 GbE connection. Many people warned us that our computers would never recognize a 10 GbE connection through Cat 5e cable, but we were pleasantly surprised to see that each of our 10 GbE capable desktops was in fact registering the full connection. With a few speed tests, we found that we were regularly able to obtain write speeds around 300–450 MB/s and read speeds of up to 700 MB/s! Somehow, even with similar equipment, our new server in Puerto Rico is actually faster than our rack system back in Charleston.
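Because bits and bytes are easy to mix up when reading speed tests, a small conversion helper can keep the numbers straight. The Python sketch below assumes decimal units (1 MB = 10^6 bytes) and treats the 700 figure as megabytes per second, the unit Windows shows during a file copy. The 100 GB project size is a made-up example, and the function names are only illustrative.

```python
def mbps_to_mb_per_s(megabits_per_s: float) -> float:
    """Megabits per second (network rating) to megabytes per second."""
    return megabits_per_s / 8


def mb_per_s_to_mbps(megabytes_per_s: float) -> float:
    """Megabytes per second (file-copy dialog) to megabits per second."""
    return megabytes_per_s * 8


def transfer_seconds(size_gb: float, rate_mb_per_s: float) -> float:
    """Time to move size_gb gigabytes at a steady rate, in seconds."""
    return size_gb * 1000 / rate_mb_per_s


if __name__ == "__main__":
    one_gbe = mbps_to_mb_per_s(1_000)     # line rate of a 1 GbE port
    ten_gbe = mbps_to_mb_per_s(10_000)    # line rate of a 10 GbE port
    print(f"1 GbE line rate:  {one_gbe:.0f} MB/s")
    print(f"10 GbE line rate: {ten_gbe:.0f} MB/s")
    print(f"700 MB/s is {mb_per_s_to_mbps(700):.0f} Mbps")

    project_gb = 100                      # hypothetical footage folder
    for label, rate in (("1 GbE line rate", one_gbe), ("700 MB/s", 700)):
        minutes = transfer_seconds(project_gb, rate) / 60
        print(f"{project_gb} GB at {label}: {minutes:.1f} min")
```

Any sustained copy rate above 125 MB/s is more than a 1 GbE link can carry, so a reading in the hundreds of megabytes per second is a quick sign that the 10 GbE link is really in use.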

Conclusion

Overall, we could not be happier with our new home network. Having converted over to a 10 GbE network a few years ago, we knew we had to build a similar system in our new studio space. Building a 10 GbE system is by no means cheap, and not every photographer or videographer will immediately notice the difference between a standard 1 GbE system and a 10 GbE system. However, if you are like us and have a team of editors that need access to the same files in the same location, having a network with even a few 10 GbE connections can make a big difference. The nice thing with the Synology NAS is that you can plug up to two computers directly into the two ports on its 10 GbE PCIe card and immediately experience lightning-fast access to your files without the need for a separate 10 GbE switch. But if you want multiple users or if you are simply future-proofing your own house or studio, I highly recommend building a simple yet powerful 10 GbE system from Ubiquiti.

If you have any questions about our new network build, how we use our NAS system with our individual workstations, or even other topics you would like us to cover as we transition into our new studio space, please feel free to leave your comments below.

18 Comments

You guys need to consider creating an aggregate for the NAS so that multiple host computers can pull data from the NAS at close to equal speeds of a single connection. The fact that you got nearly 700 MB/sec means you need to tune your TCP stack and check into transmit and receive buffers on the NIC drivers for the cards that you are using.

Care to point us to something that can help us understand this? Are you saying 700 MB/sec isn't a good benchmark for this system?

Patrick you don't start at 700, you build up to it. That means there is room for improvement. The document I am enclosing is an example of the things I would be looking at doing to improve speed: https://www.cinevate.com/confessions-of-a-10-gbe-network-newbie-part-5-…

You also might want to look at tuning the NAS. I use FreeNAS at home and after tuning the OS and my network card on my PC I get 100 MB/sec writing to the NAS which uses Seagate IronWolf 5,900 RPM drives from my machine using a Gigabit network. If your NAS is using 7,200 RPM drives, you should be getting better results. The Cat5E cabling could be a problem and you might have to upgrade to Cat6. I would look at doing this for the clients that require it.

Heya Patrick. We have exactly the same NAS and similar drives (slightly older) and we get around 2,500 Mbps read and write through our 10 GbE connection. It seems like you might be bottlenecking on bus speeds or maybe the network is really 1 GbE.

Well a 1Gb connection shouldn't go past 120Mbps right? I understand the bottleneck could be the Cat 5e cables in the walls, but if it isn't that, where would we look to see what the next problem could be?

Oh ok I follow you. You're talking megabytes per second and I was talking megabits. I'm an old network guy so I always read MBps as bytes and Mbps as bits. Anyway yeah you're good, false alarm.

BTW thanks for the article, the timing is perfect because we're moving to MacBooks and I need to extend our 10 GbE network to a few more computers, so I need switches and stuff and I'll just do exactly what you've told me.

Right Patrick. Gigabit Ethernet is good to about 120 MB/sec. I would start with tuning the NIC on one of your client machines and test. Is the NAS maxed out memory/SSD wise? If not, there is another area for possible improvement. The software on the NAS can help you pinpoint bottlenecks on the device.
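The "about 120 MB/sec" ceiling for Gigabit Ethernet can be sanity-checked with a little arithmetic. The Python sketch below assumes full-size frames, IPv4 and TCP headers without options, and a perfectly busy link, so it gives a best case rather than a prediction. The function name is made up for illustration.

```python
LINE_RATE_MBPS = 1000        # 1 GbE signalling rate in megabits per second

# Bytes that travel on the wire around every frame's payload.
PREAMBLE_SFD = 8
ETH_HEADER = 14
FCS = 4
INTERFRAME_GAP = 12
IP_HEADER = 20               # IPv4 without options (assumption)
TCP_HEADER = 20              # TCP without options (assumption)


def tcp_goodput_mb_per_s(mtu: int = 1500,
                         line_rate_mbps: float = LINE_RATE_MBPS) -> float:
    """Best-case TCP payload rate in MB/s when every frame is full size."""
    payload = mtu - IP_HEADER - TCP_HEADER
    on_wire = mtu + PREAMBLE_SFD + ETH_HEADER + FCS + INTERFRAME_GAP
    return line_rate_mbps / 8 * payload / on_wire


if __name__ == "__main__":
    print(f"1 GbE, MTU 1500:  {tcp_goodput_mb_per_s():.1f} MB/s")
    print(f"1 GbE, MTU 9000:  {tcp_goodput_mb_per_s(9000):.1f} MB/s")
    print(f"10 GbE, MTU 1500: {tcp_goodput_mb_per_s(1500, 10_000):.1f} MB/s")
```

With a standard 1500-byte MTU the overhead takes roughly 5 percent off the 125 MB/s line rate, which is why real-world gigabit copies top out just under 120 MB/s.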

You guys are doing good, I’ll go for a gathering and glass of scotch when you have free time =)

a gathering? Does biking to the beach and having an old fashioned every night count?

That can work, nothing wrong with bikes and beach

There are so many things wrong here I’m not even sure where to start. First off, Cat 5e is only 1Gb rated cable. Synology NAS (barring a couple that are 10Gb capable) are generally quite slow. Even aggregated you’ll be hard pressed to get any real speed out of them, particularly if your network is limited to 1Gb speeds due to cabling. As much as I like Unifi and all the Ubiquiti stuff, their APs are also relatively slow, usually capping out around 400-500 Mbps on the client side. Their Tx/Rx are rated much faster but they don’t perform that way in the real world, unless you’re maybe standing line of sight 6’ away. And most importantly, make sure you talk about network technologies using the proper capitalization. There is a GIANT difference between 10GB and 10Gb. Bytes and bits are not interchangeable.

In short your network is quite slow and not taking any advantage of devices equipped with 10Gb capability. Write speeds of 300-450Mbps is slooooow. Like less than half the speed of a 5400rpm spinning notebook hard drive from 2001 levels of slow. Via wired Ethernet over a 1Gb connection you should be able to get speeds of 700Mbps both directions to a Synology, or faster over an aggregated connection.

A true 10Gb network and network devices should be able to get 5000Mbps or more to a proper 10Gb NAS. That’s 500 MB/sec throughput similar to copying to a modern SSD like a Samsung T5. The Synology you have should be good for that, as aggregated it should be able to provide max speeds in the 400MB/sec area or more.

Thanks for the comment. I'll update the GB in this article to reflect Gb.

As for your statement on the cabling, with Cat 5e or Cat 6e plugged into a 1Gb network adapter, we have only ever been able to get 120Mbps transfer speeds. My understanding is this is the max of a 1Gb connection so that makes sense. Keep in mind, this is with different machines, different locations, different builds, etc etc....120Mbps has always been the cap regardless of the cable.

If we are now getting 400 - 700Mbps with our 10Gb ports, doesn't that prove that our Cat 5e cable is pushing faster data than what the exact same connection pushes when connected to 1Gb ports? Or are you saying the limit of 1Gb ports should be 1000Mbps? If so, I've never been able to get those speeds on a 1Gb connection even when plugged directly into a NAS.

We have some Cat 6e cabling here that we could run outside and manually plug into a computer and the NAS. I might do that test just to see if the cabling makes any difference.

I guess in the end, my biggest take away is that we went from 100Mbps to now 500-700Mbps. This has never been achievable for us with anything other than a 10Gb connection even with the newest cables. You might always be chasing after faster and more optimized speeds but at the end of the day, this is 7x faster than what we had before on a single 1Gb connection.

There’s something else going on. Couldn’t tell you what precisely, but likely cable or switch related.

It’s worth noting again that Synology are generally not very fast and do weird speed throttling things sometimes. That said, I regularly get around 35MB/sec via WiFi to my Synology. When I plug my MBP into a gigabit Ethernet adapter I get between 75-80MB/sec copying to the Synology. When I use a 10Gb adapter to a fast SSD raid I get upwards of 400-500MB/sec, but with a super fast array you could actually attain 1000MB/sec+ over a single 10Gb link.

When you’re talking about achieving 35-75MB/sec throughput that’s not any sort of achievement, and the potential of 10Gb is being wasted. You need to look at your cabling, all your potential bottlenecks, etc. Something is choking your performance.

Also note that your speedtest.net tests are really only testing your internet connection. It’s from your computer, through your LAN infrastructure, to the internet. It is unlikely to indicate anything about your local network since even the slowest LAN devices should easily be able to do 200/20Mbps. For reference I get around 400-500Mbps up/down via WiFi using a Unifi AP Pro and a 13” MBP over 1Gb symmetrical fiber. When I plug in via Ethernet using a 1Gb adapter I get 930-1000Mbps symmetrical.

One other thing, different network protocols perform differently speed wise. Particularly in regards to Synology. I often get 10-20% faster speeds copying to my Synology if I use SMB vs AFP.

And, Cat 5e is only rated for 1Gb and is surely doing you a major disservice. You really want Cat 6a or greater for your whole network if you want to attain 10Gb speeds.

Other things of note: You need every device between your computer and Synology to be 10Gb compliant. If it’s not you will immediately be throttled to the limits of that device (or cable). Also your computer hard drive needs to be as fast as your 10Gb connection if you want to take advantage of it, say for instance a new MBP or an iMac Pro (bench over 2,500MB/sec!). Anyways, you should be seeing speeds of ~400-600MB/sec (4000Mbps +/-) with a 10Gb equipped Synology that has fast drives in it coupled to your 10Gb equipped computer (as long as it has a drive capable of those speeds) when copying larger contiguous files.

Good luck with your project.

I've read all your posts and one thing that I think you might be missing is that we are getting 400-600 MB/sec transfer and write speeds. That's what the speed test on Lee's computer showed in the video. Maybe it was lost in the bits vs bytes nomenclature, but on Windows it shows MB/s and our files are in fact transferring at 400-700 MB/s.

Also, I think our cable modem is the bottleneck for our internet. Do they make 10Gb modems for internet and can coaxial from the street support 10Gb? Either way, we are only paying for 250 down and 25 up so as long as we are getting that there isn't much more for us to do on the internet speed side of things.

It's so satisfying when everything comes together and works on the first try. Cheers.

Patrick Hall I'm building a house, and am considering many of the products you're using in this build. Could you comment on the noise of the rack mounted gateway and switch etc? The rack will end up in the basement, but we plan on finishing the basement, so I'm concerned about noise. Maybe a quick phone vid or something? Thanks man.