Here comes the shock: you can get extremely sharp photos with cheap gear. Let’s have a look at what sharpness is and how we can improve it.

What Is Sharpness?

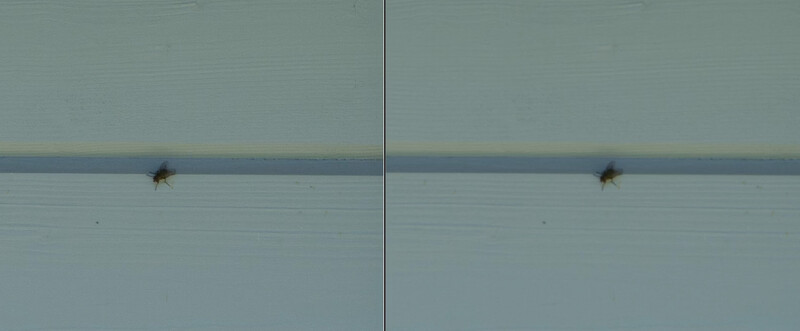

We all want to get sharp photographs. But what is sharpness, actually? Let’s forget about all the compositional methods and light for a moment. Let’s have a look at the image below, which is just a quick snapshot of a fly. The left image is sharp, the right one is blurry. But what is the difference? Moving the slider to the right will show you the original size of the image. Moving it to the left instead will show you what happens when we zoom in very close with Photoshop. The interesting parts are the edges of the fly: the wings and the feet, in this case. While the pixels in the sharp version have more contrast with each other, the pixels of the blurry version seem to have inherited information from their neighboring pixels, which leads to an unsharp appearance.

So, when we break it down, sharpness is nothing more than contrast between neighboring pixels. Let's have a look at which methods we can use to get sharper photos.
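To make this idea concrete, here is a minimal Python sketch that is not taken from the video. It treats a single row of pixel values as a tiny image. The helper names `steepest_step` and `box_blur` are hypothetical and chosen only for this illustration, and the three-pixel average is just one simple way to model pixels that inherit information from their neighbors.

```python
def steepest_step(row):
    """Largest brightness jump between two adjacent pixels in the row."""
    return max(abs(b - a) for a, b in zip(row, row[1:]))


def box_blur(row):
    """Average each pixel with its left and right neighbor.

    Edge pixels reuse their own value in place of the missing neighbor.
    """
    padded = [row[0]] + row + [row[-1]]
    return [
        (padded[i - 1] + padded[i] + padded[i + 1]) / 3
        for i in range(1, len(padded) - 1)
    ]


# A hard edge: dark pixels directly next to bright pixels.
sharp_edge = [0, 0, 0, 0, 255, 255, 255, 255]
blurry_edge = box_blur(sharp_edge)

print(blurry_edge)                 # [0.0, 0.0, 0.0, 85.0, 170.0, 255.0, 255.0, 255.0]
print(steepest_step(sharp_edge))   # 255
print(steepest_step(blurry_edge))  # 85.0
```

The total change from dark to bright is the same in both rows, but in the blurred row it is spread over three pixels instead of one. That lower contrast between direct neighbors is what we perceive as softness.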

A Good Tripod Is More Important Than a Camera

This saying has been around for many years, and it is still true. When our camera moves while we expose, the image is blurred. I am not talking about long exposures over multiple seconds, as everyone is careful with long-exposure shots. In my experience, most trouble with shaky images occurs at shutter speeds between 1/50 and 1/2 of a second. A good tripod is useful here, and using a remote release or at least a two-second timer avoids shake caused by touching our gear.

Motion Blur

Just as it is important that our camera is steady, it is also important that the elements in our composition don’t move, unless we want to use their movement to support the story our image should tell. Therefore, we need to choose a short enough shutter speed, which depends on how fast the element moves. The faster an element moves, the shorter the shutter speed has to be to avoid motion blur.

When we work in a studio or in a situation with enough light and slow or immobile elements, this will not be an issue. But if we want to photograph woodland scenes without artificial light sources, for instance, with foliage that moves in the wind, we will have to increase the ISO to get the shutter speed short enough, or open the aperture to get more light onto our sensor.
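How much ISO or aperture a shorter shutter speed costs is simple arithmetic in stops, where each stop halves or doubles the light. The following Python sketch works through one example. The starting exposure (1/15 s, ISO 100, f/8) and the target of 1/120 s are assumed values chosen for illustration, and the function names are hypothetical.

```python
import math


def stops_between(slow_shutter_s, fast_shutter_s):
    """Stops of light lost when going from the slow to the fast shutter speed."""
    return math.log2(slow_shutter_s / fast_shutter_s)


def iso_to_compensate(base_iso, slow_shutter_s, fast_shutter_s):
    """ISO that keeps the exposure constant if only the ISO is changed."""
    return base_iso * slow_shutter_s / fast_shutter_s


def f_number_to_compensate(base_f_number, slow_shutter_s, fast_shutter_s):
    """f-number that keeps the exposure constant if only the aperture is changed.

    The light reaching the sensor scales with 1 / f_number**2.
    """
    return base_f_number / math.sqrt(slow_shutter_s / fast_shutter_s)


# Assumed example: metered at 1/15 s, but moving foliage needs 1/120 s.
slow, fast = 1 / 15, 1 / 120

print(round(stops_between(slow, fast), 2))              # 3.0
print(round(iso_to_compensate(100, slow, fast)))        # 800
print(round(f_number_to_compensate(8, slow, fast), 1))  # 2.8
```

In this example, the three lost stops can be recovered by raising the ISO from 100 to 800, by opening the aperture from f/8 to about f/2.8, or by a mix of both.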

Aperture: Friend or Enemy?

Opening the aperture a bit more is a good tip to get rid of unwanted motion blur, as we get more light on the sensor. But there are two more things we should consider about aperture to get pin-sharp photographs. First of all, lenses are not equally sharp at each aperture. So, if we open the aperture too much, the images could become softer. What’s happening here is that the optical elements inside your lens simply mix the information between neighboring pixels. So, we lose sharpness. This can be desirable, of course, especially when you want to get soft bokeh, to isolate a subject from the backdrop.

On the other hand, when we close the aperture too much, we get diffraction, which makes the entire photo look softer. The aperture is a very important stylistic instrument. It allows us to define the depth of field, or in other words: what range of your composition is sharp.
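To get a feeling for when diffraction starts to matter, we can estimate the size of the blur spot it produces. The sketch below uses the standard Airy disk approximation, in which the diameter of the central spot is about 2.44 times the wavelength times the f-number. The wavelength of 0.55 µm (green light) and the pixel pitch of 4.4 µm are assumptions for illustration and do not describe any particular camera.

```python
def airy_disk_diameter_um(f_number, wavelength_um=0.55):
    """Approximate diameter of the diffraction blur spot in micrometers.

    Uses the Airy disk approximation: diameter = 2.44 * wavelength * f-number.
    """
    return 2.44 * wavelength_um * f_number


ASSUMED_PIXEL_PITCH_UM = 4.4  # hypothetical sensor, for comparison only

for f_number in (4, 8, 16, 22):
    diameter = airy_disk_diameter_um(f_number)
    pixels_covered = diameter / ASSUMED_PIXEL_PITCH_UM
    print(f"f/{f_number}: {diameter:.1f} um, about {pixels_covered:.1f} pixels wide")
```

With these assumed numbers, the blur spot at f/16 is already several pixels wide, which is why stopping down too far softens the whole frame even when the focus is perfect.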

Sharp Light

Sharp light? There is no such thing as sharp light, of course. But let’s remember what sharpness is: it is the contrast between the pixels. And how can we increase contrast? Light is our friend here. Especially when the light comes from the side, it will make all the tiny elements visible, all the structures and textures in our scene. The image starts to look sharper, just by using nice sidelight.

Focus

I’m sure it will not surprise you when I tell you that you get blurry images when they are out of focus. But if you have read this article attentively, I’m sure you also know why this is the case. Out of focus simply means that the focal point is too far in front of or too far behind the sensor. The pixels get information from their neighboring pixels. This is why it is really, really important that you always try to nail the focus.

Many more tips and more details about how to get razor-sharp images are revealed in the above-linked video.

12 Comments

I recently learned the trick of using spikes on the foot of the tripod to truly anchor it to the ground over soft surfaces. Even on hard surfaces it allows vibrations to die down faster. It really works. I do infrared photography with a filter (non-converted camera). At ISO 100 I usually need 45-second exposures. It is shocking to see the tree trunk tack sharp while the clouds and leaves are not.

IMO sharpness with any lens starts in the camera. That means testing the camera's picture control sub-menus for sharpening, contrast, brightness, saturation, and hue. Testing each setting (+/-/A) and comparing images will show you what you can get from your camera body. Then in PS, I compare images at 100% & 200% to find the best setting. It saves a lot of work post-processing as your image is sharp when you import.

Yes, there are a lot of images that have to be shot and compared. The end result is worth the work. Then using your concepts just adds the one other layer to making great images.

Just to clarify for new/less experienced photographers... The comment above does not apply to anyone who shoots RAW. These settings make no difference there. This is only for folks who shoot in-camera jpegs (which is almost always ill advised).

What you are saying is that in RAW format, all of the internal camera settings under picture management are bypassed? I never shoot in JPEG, always RAW. Can you point me to an article or tutorial on these settings? Perhaps I missed a lesson somewhere.

I don't have a reference for you, but except for Long Exposure NR, settings like noise reduction, sharpness or picture styles don't affect the RAW files. They do affect what you see in the preview on the back of the camera, though.

I don't understand this statement: "What’s happening here is that the optical elements inside your lens simply mix the information between neighboring pixels."

The way it's written seems to imply that the optical elements have pixels (which, obviously, they do not). That being said, I'd truly like to understand what the author actually meant here. Does anyone here have the technical background to explain?

I believe what he means is that, in the ideal optical system, every point in the camera field of view will map uniquely and completely to one pixel on the sensor. In actual optical systems, if the focus is not perfect, the light rays from a point in space will be spread out radially from the center pixel; this is known as the "point spread function (PSF)" in optics. As the optical system gets further from "ideal" focus, the PSF can grow large enough that it starts to illuminate neighboring pixels on the sensor. Likewise, as the PSF grows, the pixel of interest will start to be illuminated by rays that should have been focused on the neighboring pixels, so the "central" pixel is getting photons from all of the neighboring pixels' points in object space. I think this is what he means by saying the optical elements mix information between neighboring pixels. Simplified illustration is below.

Could we say this is what we call the "circle of confusion"?

This is quoted from the MasterClass website: "In photography, the circle of confusion (CoC) describes a point of light directed onto a camera’s focal plane by the lens. Depending on the camera’s aperture, depth of focus, and field of view, the diameter of this dot of light might be extremely narrow when it hits the camera sensor, or it might be wider. The wider the diameter of the circle of confusion, the blurrier the dot appears to the human eye. The circle of least confusion is the smallest blur spot a given camera-lens can produce. The circle of least confusion varies from lens to lens."

It is not quite the same, but it is similar. The above explanation and diagrams were for the case that the author described, in which the optics are at the best achievable focus, but the focus is not as sharp as one might desire. In his example, it was because the lens was at an aperture that did not provide as sharp a focus as other apertures. Thus, the PSF was larger.

To tie it to your excerpt from the MasterClass, the PSF is analogous to the Circle of Least Confusion (CoLC), i.e. the best that the optic can achieve at the plane of focus.

The CoC is illustrated by the diagrams below. When the optics are focused "optimally", only objects in a single plane will be optimally sharp because the rays will converge exactly on the sensor plane, as shown by the top diagram. For objects nearer to or farther from the plane of focus, the rays will not converge at the sensor plane, but in front of or behind it, as illustrated in the second and third diagrams. The effect is that they will be slightly out of focus. The difference in this case is that this degradation of focus is expected, even for perfect optics. The PSF of the optics can still be very tight, but only for objects at the plane of focus.

The CoC is defined by the amount of degradation for objects off the plane of focus that can be tolerated while still being considered acceptably sharp. There is no single way to define it. In scientific applications, such as radiometry equipment, detection systems, etc., the CoC is usually defined based upon the size of the pixels. Only objects that do not "spill over" into neighboring pixels would be considered to be acceptably sharp. In photography, the definition has historically been derived from the visual acuity of the human eye, for images viewed at a "normal" viewing distance. Hence the longstanding definition of 1/1500 of the diagonal of the film frame or sensor.

Once the CoC is determined, the DOF can be calculated for the optical system, as is illustrated in the diagrams.

For 35 mm film, or full frame digital, this calculates to approximately 0.029 mm. This is the value of CoC that is used in generating the Hyperfocal Distance and DOF values in applications such as PhotoPills. For non-FF cameras, the CoC is scaled by dividing by the crop factor. APS-C with a 1.5 crop uses 0.019 mm for the CoC.
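The numbers above can be reproduced with a short Python sketch. It assumes a 36 × 24 mm frame for full frame and uses the common textbook approximation for the hyperfocal distance, H = f² / (N · c) + f. It is not meant to describe how PhotoPills or any other app computes its values, and the function names and the 24 mm f/8 example are hypothetical.

```python
import math


def circle_of_confusion_mm(width_mm, height_mm, divisor=1500):
    """CoC as the sensor diagonal divided by 1500 (the convention quoted above)."""
    return math.hypot(width_mm, height_mm) / divisor


def hyperfocal_distance_mm(focal_length_mm, f_number, coc_mm):
    """Textbook approximation: H = f^2 / (N * c) + f."""
    return focal_length_mm ** 2 / (f_number * coc_mm) + focal_length_mm


coc_full_frame = circle_of_confusion_mm(36, 24)
coc_aps_c = coc_full_frame / 1.5  # scale by the crop factor

print(round(coc_full_frame, 3))  # 0.029
print(round(coc_aps_c, 3))       # 0.019

# Assumed example: a 24 mm lens at f/8 on a full-frame body.
h_mm = hyperfocal_distance_mm(24, 8, coc_full_frame)
print(round(h_mm / 1000, 2))     # 2.52 (meters)
```

In this example, focusing at roughly 2.5 m would keep everything from about half that distance to infinity within the acceptable CoC.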

To sum up, the CoLC or PSF is the best focus that can be achieved at the plane of focus, the CoC is the amount of degradation from the best focus for objects fore or aft of the plane of focus that can still be deemed to be acceptably sharp.

Hope this helps and that I didn't confuse you.

This is, in my opinion, why we run a lens test from wide open to the smallest aperture. Somewhere around 2-3 stops from wide open, the lens will produce the sharpest image. You have to look all the way out to the edges.

I bought a used lens and ran a test on it and found that 2 1/2 stops down was the sharpest. Even then it was not all that sharp, as I found out the lens was made for a film camera. The resolution on a 25 MP FF body was not great. I put it on an older 12 MP FF body and it resolved decently enough to be usable. Thank you for your expertise. :)

You're very welcome.

I would also add that better lenses have coatings that help prevent flare. Flare even when not overly apparent can degrade sharpness.