At this year’s Adobe Max conference for creative and design professionals in Los Angeles, CA, the focus was on using AI technology to enhance creativity. As Shantanu Narayen, CEO of Adobe, stated, people are creating more than ever before, and it is Adobe’s intention to use AI to allow creators to become both more creative and more productive.

Although Firefly’s generative AI abilities have been in existence for under one year, users have already used the tech to create over three billion images, which Adobe states is the fastest that any of their technology has ever been adopted by so many users. One concern users have had with using AI-generated additions is the source of the content that is being added. Some AI platforms have produced images that clearly show signs of a watermark being removed, indicating that the images being used to train AI and to output new imagery were used without permission. Adobe assures users that no copyrights will be infringed upon while using Firefly technology in their creations. The goal is to integrate AI technology seamlessly across Adobe’s apps. AI can be thought of as one ingredient in the creative process rather than a complete solution. It is Adobe’s contention that AI will never replace human ingenuity.

Adobe’s latest updates are built on the Firefly platform, allowing AI-assisted content creation in design, photography, and videography. Adobe promises that Firefly is optimized for native integration into its tools so that users can use the feature as easily as they have used pre-existing features. Firefly is safe for commercial use because Adobe has secured the rights to any pre-existing images that are incorporated into a user’s creations. The program respects “do not train” meta tags that creators can use to opt out of having their content used for training AI models. One of the most common uses for AI-generated content is to expand an image beyond the scene that was originally created. Firefly’s addition to the image matches the perspective, style, and lighting of the original image, making it virtually impossible to tell what was originally captured by the camera and what elements were added through AI tech. AI additions to an image are placed on a new layer so that the user can manipulate them at will.

David Wadhwani, Chief Business Officer of Digital Media at Adobe, pointed out that when Photoshop was first released in the early 90s, not everyone shared enthusiasm for digital art. Many creators worried about their careers and craft and wondered if there was a place for digital art in a world where art was printed, drawn, or painted. These same questions are arising today about the place of AI-enhanced creations in a world where all previous art has been fully created in the human mind. We need not worry, Wadhwani suggests. One could make the case that painters actually began producing their most interesting work after the advent of photography. Once photography made it possible to create realistic depictions of the world around us, painters were free to pursue more abstract depictions.

In today’s world where many of us spend hours each day consuming photographs and videos, the demand for content isn’t likely to fade anytime soon. Demand for fresh content has doubled in the past few years and is expected to increase five-fold in the next few years. AI technology can help the average person bring their vision to life in a way that hasn’t been possible previously.

Below are a few examples of how Adobe is continuing to integrate AI technology across its software.

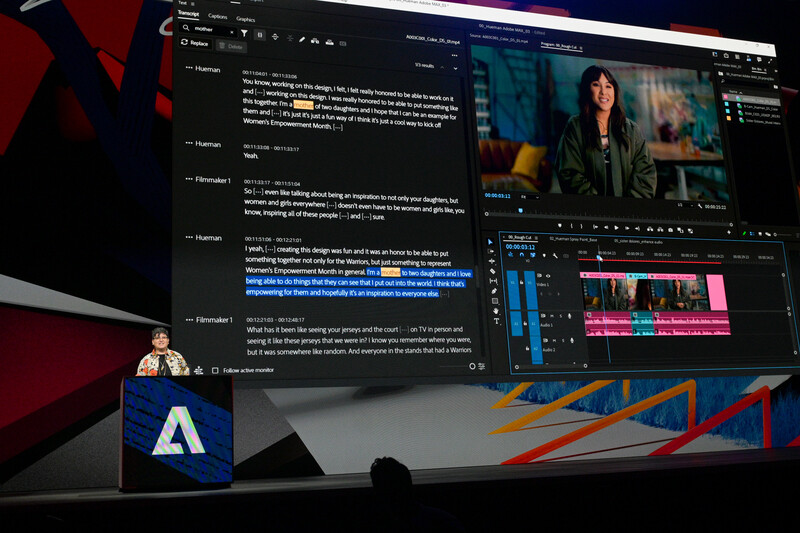

Premiere Pro

Premiere Pro has introduced text-based editing. The program will automatically transcribe speech. This allows the user to read text rather than watch video to find the relevant clips needed in the final edit. The user can select the text just as they would in a document. This text can be copy/pasted as it would be in a document, resulting in the video clip being edited.

AI technology will also allow Premiere to locate filler words, like “um,” that most speakers utter when thinking about what they will say next. Once located, all of these fillers can be deleted as easily as one might delete them from text. Once removed from the text, they are removed from the clip, and the speaker’s words can flow more smoothly.

Premiere will also feature enhanced audio editing that allows for unwanted noise from wind, background music, and traffic to be removed instantly using the Essential Sound panel. The tool is capable of isolating the voice. There is also a slider that allows the editor to add back in some of the natural background sounds that were present in the original recording.

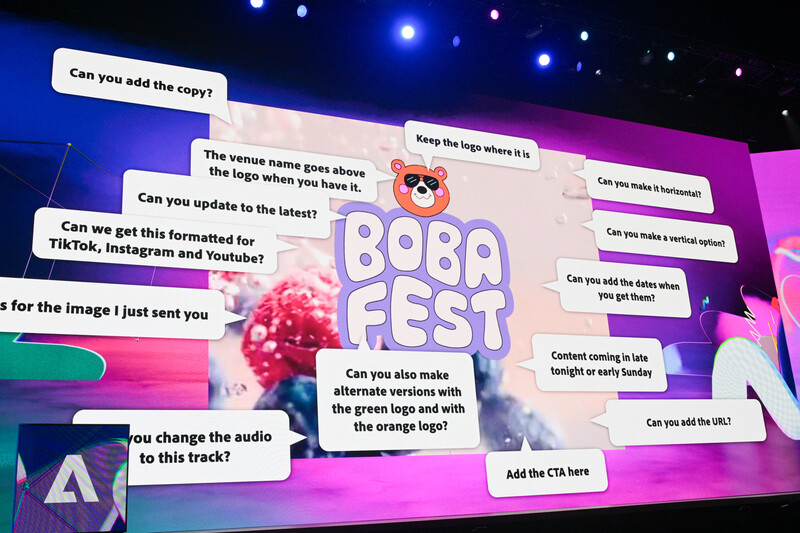

Express

Express is a platform that allows users to design flyers, banners, cards, invitations, signs, and other types of printed information graphics. AI is incorporated through a “Tell us what image you want to create” prompt. In the past, a user might have input, “Birthday party flyer for a 5-year-old.” Express will allow for more specific instructions, such as “Birthday flyer for a cat named Scarlet wearing a police officer’s uniform.” The program will create a variety of templates in different styles and with different text that suit this prompt. Once a design has been selected from the original offerings, the user can generate variations of this design until the creator’s vision is refined.

Designs in Express can be created using templates provided by the program. Once the design has been created, the user can experiment with animating specific elements through effects such as flashing, flickering, looping, and spinning. These animation effects can be controlled by way of sliders. This allows the user to take a flyer that was printed and then animate it for use on Instagram or TikTok.

Stock images can be incorporated into the original design, or the user can design with their own images. Individual elements can be edited in Photoshop or Illustrator while they are being used in Express. Updates made outside Express are reflected in Express in real time.

To preserve the original creator’s vision, specific elements of a design can be locked so that additional creators cannot modify these elements. This is useful if the creator wants to retain certain colors or fonts in their design. The locked elements can be saved as a template so that future projects can showcase the look of the brand. Once the template has been created, it can be shared with other people who can make adjustments while still staying within the parameters of the original design. Templates are also built into the program for easy output and posting to Instagram, Facebook, and TikTok. Design elements are automatically moved to format the design in a visually appealing way for each specific platform. Express also includes a content calendar that allows you to schedule the time and date that posts will be made to these platforms.

Firefly

The latest updates to Firefly allow the user to indicate elements to “exclude from image.” Users can also indicate the field of view in which the AI image should appear. There is also an enhanced ability to control the style and texture of an image.

To ensure that AI images are as close to the user’s vision as possible, a user can upload a reference image indicating how the final image created by Firefly should look. The content of the uploaded image does not need to match that of the AI-prompted image. If the user were utilizing AI to create a portrait, they could upload an image of a brick wall and use Generative Match to indicate the color palette desired in the final image. In keeping with Adobe’s promise not to infringe upon creators’ rights, the program will ask the user to confirm they have the rights to use the uploaded image.

While today’s updates to Adobe’s Creative Cloud suite of programs are indeed impressive, the company promises that the next iteration will be equally impressive with improved capabilities regarding audio, video, and 3D rendering.

2 Comments

In the announcement, it was said that Gen 2 will have 4 times the resolution, so if its max right now is 1024 x 1024, then it will be 4 times that? If so, take my money. This Gen 2, which hopefully will be coming to Photoshop beta soon, is already 100 times better than Gen 1.

My understanding was that everything announced is available in the program right now. I haven't had time to independently verify that, however.