MIT researchers have found a way to recover "lost details" from images and to create clear versions of motion-blurred parts of videos. Some experts suggest the process could one day even convert X-rays into CT scans.

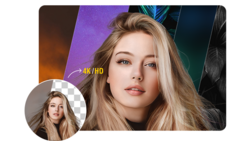

In this latest breakthrough, the team "trained" a convolutional neural network by feeding it pairs of low-quality images and their high-quality counterparts. This allowed it to learn how high-quality footage gives rise to blurry, barely visible images. Given a blurred input, the network then works out what in the video could have been responsible for the blur. Finally, it generates new images that combine data from both the high- and low-resolution parts of the video.
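To make the idea of a training pair concrete, here is a minimal sketch in Python. It is not the team's code, and the article does not describe their data pipeline. It assumes, for illustration only, that a motion-blurred image can be approximated by averaging sharp frames over time; the function names `blur_from_frames` and `make_training_pair` are hypothetical.

```python
# Illustrative sketch only: not the researchers' pipeline.
# Assumption: motion blur is approximated by averaging sharp frames over time.

def blur_from_frames(frames):
    """Average equally sized grayscale frames (lists of rows) pixel by pixel."""
    if not frames:
        raise ValueError("need at least one frame")
    height, width = len(frames[0]), len(frames[0][0])
    count = len(frames)
    return [
        [sum(frame[y][x] for frame in frames) / count for x in range(width)]
        for y in range(height)
    ]

def make_training_pair(frames):
    """Return (low_quality_input, high_quality_target) for one hypothetical clip."""
    return blur_from_frames(frames), frames

# A bright pixel moving left to right across a 1x3 image over three frames.
clip = [
    [[1.0, 0.0, 0.0]],
    [[0.0, 1.0, 0.0]],
    [[0.0, 0.0, 1.0]],
]
blurred, sharp = make_training_pair(clip)
```

In this toy clip the moving pixel is smeared evenly across the blurred image. A network trained on many such pairs would have to learn the reverse mapping, which is hard because different clips can produce the same blur.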

The long-term goal is to use the same process to turn 2D images into 3D ones. The implications could extend to the medical field, in particular helping poorer countries that struggle to afford the latest technologies.

Guha Balakrishnan, the research paper's lead author, said:

"If we can convert X-rays to CT scans, that would be somewhat game-changing. You could just take an X-ray and push it through our algorithm and see all the lost information."

For photography, the model may be able to "de-blur" an image, removing blurry elements and producing a much sharper still.

Read more at Engadget.

1 Comment

New life for my old blurry concert pictures from the 70s...