Computational photography is quickly becoming one of the leading threads for the future of our industry. Whether we realize it or not, it is already deeply integrated into our DSLRs and cameraphones in a supporting role, while some manufacturers have embraced it as the fundamental basis of their equipment. Recently, I chatted with the team from Algolux about how they’re tackling some of the most relevant problems in photography to enable a future in which software and hardware work more in tandem than ever before.

Based in Montreal, Algolux was started in the tech incubator TandemLaunch, from which it spun out as an independent company in 2014, receiving Series A financing and narrowing its focus to computational photography (for which it currently holds six patents: four granted, one pending, and one provisional). I spoke with Paul Boucher, VP of Research and Development, and Jonathan Assouline, Head of New Technology Initiatives, about the state of computational photography and what the future holds.

Reimagining Image Processing

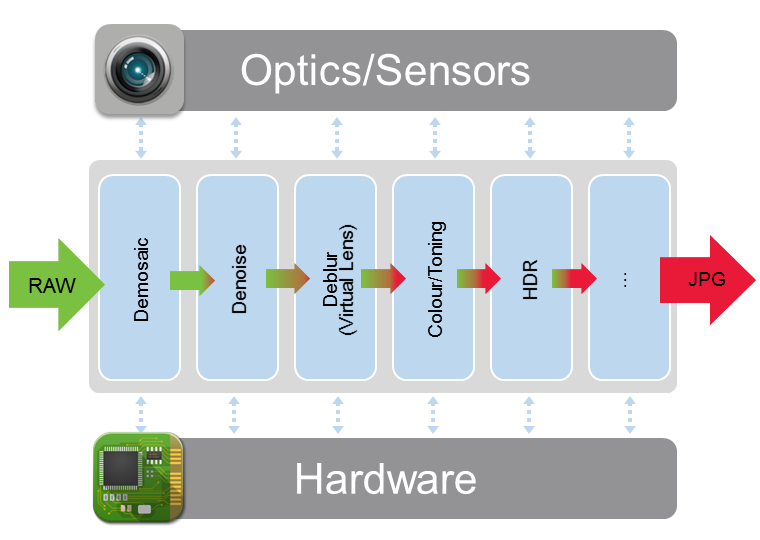

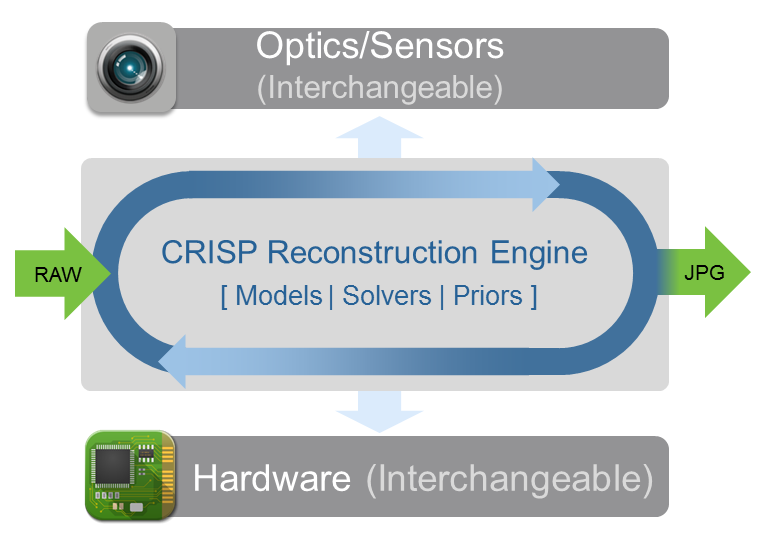

The current image processing environment is a bit fragmented, with different steps handled at different stages and often optimized by different manufacturers. This means that all the parameters have to be reexamined for each new combination of camera body and lens. The core technology behind Algolux’s computational approach is the CRISP engine (“Computationally Reconfigurable Image Signal Platform”). It seeks to place all of these image processing steps into one framework, thus optimizing them to work in tandem and minimizing the compounding effect of errors that propagate across discrete steps.

Because the process iterates based on the raw data instead of referring to it only at the outset, there are no compounding errors. Each iteration of the algorithm refers back to the original data, rather than carrying through computed results. Computer math is often imperfect, as computers cannot store infinitely long decimals. Thus, errors are often introduced when the decimal is truncated — errors that can propagate and grow if not carefully handled. Check out the diagrams below.
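To make the idea of compounding error concrete, here is a minimal sketch. It is not Algolux's code; the function names, the 8-bit rounding, and the two gain values are assumptions chosen purely for illustration. It compares a pipeline that stores a rounded result after every stage against one that works from the original value and rounds only once.

```python
def quantize(value, levels=255):
    """Round a value in [0, 1] to the nearest step of an 8-bit scale."""
    return round(value * levels) / levels


def staged_pipeline(pixel, gains):
    """Apply each gain as its own stage, storing a rounded result after every stage."""
    for gain in gains:
        pixel = quantize(pixel * gain)
    return pixel


def consolidated_pipeline(pixel, gains):
    """Combine all gains first, apply them to the original value, and round once."""
    total_gain = 1.0
    for gain in gains:
        total_gain *= gain
    return quantize(pixel * total_gain)


pixel = 0.25
gains = [0.1, 10.0]  # hypothetical stages: darken, then brighten; exact result is 0.25

staged = staged_pipeline(pixel, gains)
consolidated = consolidated_pipeline(pixel, gains)

# Distance of each result from the exact answer.
print(abs(staged - pixel), abs(consolidated - pixel))
```

In the staged version, the rounding error made in the first stage is multiplied by the second stage's gain, so the final value lands noticeably further from the exact answer than in the version that rounds only once.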

The idea is that by moving the linear imaging pipeline into a consolidated and software-driven framework that optimizes all aspects of image processing simultaneously, specific aspects of the process can be focused on and improved independently. This reduces the need for testing and calibrating hardware, while optimizing overall image quality by optimizing all parameters and sub-processes simultaneously. It’s simpler than making small adjustments to independent steps, the conventional method used for hardware-based ISPs. Furthermore, updates can be pushed with relative ease, allowing continual improvement to existing products and quicker implementation of new features.

This also means the optimization of the various procedures can be changed and improved in real-time with real data. Turning ISP optimization into a formula opens the door to a self-learning, data-driven approach to ISP tuning, effectively replacing what is currently a very costly step-by-step approach.

As we move into an age where quality optics will be increasingly augmented by quality computation, software is becoming just as important as its accompanying hardware. Algolux really sees the CRISP approach as the future, noting that by 2018, likely half of all smartphones will contain a computational camera. Check out some sample images from CRISP below.

A Computational Method to Remove Lens Blur

One of Algolux’s first applications of CRISP is Virtual Lens, an algorithm designed to reduce optical aberrations, lowering hardware costs and improving overall image quality. Mathematically and computationally, this is a tough problem, one that centers on a deep understanding of point spread functions. In optical theory, the point spread function is a fundamental concept that describes how an optical system responds to a point source, literally how it spreads the point in its rendering. An ideal optical system renders a point as just that, a point. Losses in sharpness and resolution, along with other aberrations, can be encoded by the behavior of this function. In other words, if one knows just how a system handles a point source, one can theoretically restore the point. In mathematics, we call this an “inverse problem,” because we are taking results and calculating causal factors, rather than calculating results from known causes. Inverse problems are notorious for being particularly nasty.
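As a rough illustration of what "spreading a point" means, the sketch below blurs a one-dimensional point source with a small, made-up point spread function. The kernel values and the helper name `convolve_same` are assumptions for this example only; real lens PSFs are two-dimensional and must be measured.

```python
def convolve_same(signal, psf):
    """Blur a 1D signal with an odd-length PSF; samples outside the signal count as zero."""
    half = len(psf) // 2
    blurred = []
    for i in range(len(signal)):
        total = 0.0
        for j, weight in enumerate(psf):
            k = i + half - j  # index of the input sample this weight applies to
            if 0 <= k < len(signal):
                total += weight * signal[k]
        blurred.append(total)
    return blurred


psf = [0.25, 0.5, 0.25]               # hypothetical blur kernel that sums to 1
point_source = [0, 0, 0, 1, 0, 0, 0]  # a single bright point on a dark background

print(convolve_same(point_source, psf))
# [0.0, 0.0, 0.25, 0.5, 0.25, 0.0, 0.0]
```

The output is simply a copy of the PSF centered on the point's position, which is why photographing a point source is, in principle, a direct way to read off how a system blurs.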

Estimating the point spread function is especially difficult. Not only does each lens design exhibit its own point spread behavior, but each individual copy of a lens has its own signature due to manufacturing tolerances, which is really where Algolux’s job comes in. There’s a balance between many factors here: one must estimate the point spread function with enough precision to make meaningful corrections while keeping computational complexity in check. The idea is to constrain the deconvolution, the mathematical process that returns a representation of the true image: the more information, the stronger the constraints, and the more accurate the output. By starting with a very good estimate of the point spread function before beginning any computations, we can greatly improve final output quality and reduce computational complexity, a huge issue for mobile platforms. CRISP also pre-conditions the problem by mathematically guiding the process toward a solution consistent with statistical models of natural images.
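A constrained deconvolution can be sketched in a few lines, with heavy caveats. The example below is not the CRISP algorithm. It assumes the PSF is known exactly, works on a noise-free one-dimensional signal, and uses a simple penalty on the size of the solution as a stand-in for the natural-image statistics mentioned above. All names and parameter values are hypothetical.

```python
def convolve_same(signal, kernel):
    """Blur a 1D signal with an odd-length kernel; samples outside the signal count as zero."""
    half = len(kernel) // 2
    out = []
    for i in range(len(signal)):
        total = 0.0
        for j, weight in enumerate(kernel):
            k = i + half - j
            if 0 <= k < len(signal):
                total += weight * signal[k]
        out.append(total)
    return out


def deconvolve(blurred, psf, iterations=200, step=1.0, penalty=0.001):
    """Gradient descent on 0.5*||psf * x - blurred||^2 + 0.5*penalty*||x||^2."""
    flipped = psf[::-1]       # convolving with the flipped PSF applies the adjoint blur
    estimate = list(blurred)  # start from the blurred observation
    for _ in range(iterations):
        # Every iteration compares against the original blurred data.
        reblurred = convolve_same(estimate, psf)
        residual = [r - b for r, b in zip(reblurred, blurred)]
        gradient = convolve_same(residual, flipped)
        estimate = [x - step * (g + penalty * x) for x, g in zip(estimate, gradient)]
    return estimate


def l2_error(a, b):
    """Euclidean distance between two signals of equal length."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


psf = [0.25, 0.5, 0.25]                                 # assumed to be known exactly
sharp = [0.0, 0.0, 0.0, 1.0, 1.0, 1.0, 0.0, 0.0, 0.0]   # a bright bar with hard edges
blurred = convolve_same(sharp, psf)
restored = deconvolve(blurred, psf)

print(l2_error(blurred, sharp), l2_error(restored, sharp))
```

The restored signal ends up closer to the sharp original than the blurred one does. With real images, noise and an imperfect PSF estimate make the problem far less forgiving, which is where stronger priors and a better PSF earn their keep.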

A further complication is that the point spread function is not uniform across a lens; it varies at different parts of the image circle, meaning one must measure and map the behavior across the entire lens, rather than simply measuring a single point source in the center. Typically, an image scientist will take a picture of a noise pattern and attempt to determine blurring characteristics from that. The idea and the math behind it are relatively well understood; it’s the practical issues that cause complications. Lens designs are very complex, real-world pieces of glass are not perfectly manufactured, and mobile hardware has its own limitations.
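One simple way to picture a PSF map is a calibration table that stores a measured PSF at a few distances from the image center and blends between neighbors for everything in between. The sketch below assumes exactly that. The table values, the normalized radius, and the linear blending are illustrative assumptions, not a description of how Algolux maps a lens.

```python
def interpolate_psf(radius, calibration):
    """Linearly blend the two measured PSFs whose radii bracket `radius`.

    `calibration` is a list of (radius, psf) pairs sorted by radius;
    all PSFs are assumed to have the same length.
    """
    if radius <= calibration[0][0]:
        return list(calibration[0][1])
    for (r0, psf0), (r1, psf1) in zip(calibration, calibration[1:]):
        if radius <= r1:
            t = (radius - r0) / (r1 - r0)
            return [(1 - t) * a + t * b for a, b in zip(psf0, psf1)]
    return list(calibration[-1][1])


# Hypothetical measurements: perfectly sharp at the center, softer at the edge.
calibration = [
    (0.0, [0.0, 1.0, 0.0]),     # image center
    (1.0, [0.25, 0.5, 0.25]),   # edge of the image circle (normalized radius)
]

print(interpolate_psf(0.5, calibration))
# [0.125, 0.75, 0.125]
```

Because each measured kernel sums to 1, any blend of two of them does too, so the interpolated PSF does not change overall brightness.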

There’s little doubt that software-based solutions are going to play an increasingly involved role in the future of photography and optics, whether that be in mobile cameras, DSLRs, medical imaging, astrophotography, industrial imaging, or a myriad of other realms. Computational photography sits at the leading edge of this new paradigm; it’s a complex synthesis of math, physics, and computer science, but with it comes the possibility to both augment and supplant traditionally hardware-driven processes, improving results, reducing costs, and increasing speed of development — all things any photographer would be happy to embrace.

Check out Algolux's website here.

5 Comments

Interesting. Seems the mobile hardware problem can be at least partially resolved using a cloud infrastructure. Raw + EXIF data is uploaded, a supercomputer crunches it, and a JPEG is downloaded. That leaves transfer speed as a fairly significant bottleneck, but would allow updates to the computing engine without even pushing anything to the customer.

Yes, transfer speed is one bottleneck, because the camera would have to come with a network connection. Another issue is that the point spread function is a signature of each phone, so precalculation is necessary to remove lens blur. If the point spread function itself is not accurate, there is no way to remove lens blur.

At first glance, interesting. However, after carefully checking the sample images, not that good. Actually, you can find thousands of similar algorithms for solving denoising and deblurring problems. Noise and lens blur are removed to some degree; however, the processed images look washed out. Some nice sharpness and rich details are also removed. I guess this is due to the fact that in numerical optimization problems, no optimal solution exists. What we get is always suboptimal. They cannot distinguish noise and artifacts from useful sharpness.

Interesting article. Though the thumbnail is a bit misleading - I didn't find it confounding at all... ;-) Ok, I got my one math pun out of the way today. Back to work.

Good read. I can add this to my formula. But I'm using a different approach for all of my editing. The lens blur is a good tip. Thanks for sharing.