Researchers have developed a new pixel design that has the potential to revolutionize dynamic range in cameras.

The design is referred to as a "self-resetting pixel." In a conventional sensor, when a pixel reaches its full-well capacity (that is, becomes saturated), it clips. This places a hard upper limit on how much highlight detail can be captured, and it is what we see as clipped highlights in images.

The new pixel design obviates this problem by resetting itself. In other words, when a pixel reaches its well capacity, it resets, records that reset, and begins accumulating charge again. It also contains a conventional ADC, which measures the charge accumulated since the last reset at the end of the exposure. The total exposure is then simply the number of resets multiplied by the well capacity, plus the remaining charge measured by the ADC. In principle, this removes any upper limit on dynamic range, meaning one could expose for the shadows or the subject without ever worrying about blowing out highlights.
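The reset-and-count reconstruction is simple arithmetic, so it can be illustrated with a small simulation. Below is a minimal Python sketch of an idealized, noiseless pixel. It assumes the reset is instantaneous, that no charge is lost during a reset, and that the ADC reads the residual charge exactly. The function names, the time-step model, and the 10,000-electron full-well figure are hypothetical illustrations, not details taken from the paper.

```python
def conventional_pixel(flux_per_step: int, steps: int, full_well: int) -> int:
    """Conventional pixel: charge beyond the full-well capacity is lost (clipping)."""
    return min(flux_per_step * steps, full_well)


def self_resetting_pixel(flux_per_step: int, steps: int, full_well: int) -> tuple[int, int]:
    """Idealized self-resetting pixel (hypothetical model).

    Returns (resets, residual): how many times the well filled and was reset,
    and the charge left in the well at the end of the exposure (read by the ADC).
    Assumes resets are instantaneous and no charge is lost while resetting.
    """
    resets = 0
    charge = 0
    for _ in range(steps):
        charge += flux_per_step
        while charge >= full_well:  # well is full: reset and count the reset
            resets += 1
            charge -= full_well     # excess charge carries over (idealization)
    return resets, charge


def reconstruct(resets: int, residual: int, full_well: int) -> int:
    """Total exposure = number of resets * well capacity + residual charge."""
    return resets * full_well + residual


FULL_WELL = 10_000  # hypothetical full-well capacity, in electrons

print(conventional_pixel(2_345, 100, FULL_WELL))  # 10000 (clipped)
resets, residual = self_resetting_pixel(2_345, 100, FULL_WELL)
print(resets, residual)                           # 23 4500
print(reconstruct(resets, residual, FULL_WELL))   # 234500
```

With 234,500 electrons arriving over the exposure, the conventional pixel reports only its 10,000-electron full well. The self-resetting pixel records 23 resets plus a 4,500-electron residual, which is enough to recover the full signal. In this idealized model, the only ceiling is how large the reset count can grow.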

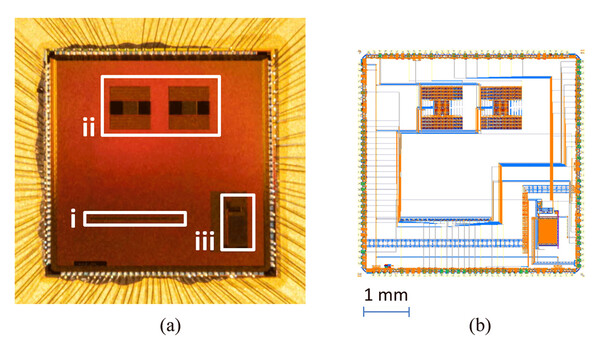

That being said, while the technology is certainly exciting, it still has some hurdles to overcome and is currently being developed for industrial applications. Most notably, the extra circuitry takes up a lot of space, thus decreasing the light-sensitive area of each pixel by a notable amount. Still, it's a promising step forward that could represent huge gains in the future. You can read the full paper here.

7 Comments

This is the type of pixel-level sensor advancement that we really need.

I think that the only way we are going to get "perfect" digital images in challenging shooting conditions is when they can get each and every pixel to "think for itself", and not just have input applied en masse to large groups of pixels.

Think of how wonderful our image quality would be if every single pixel had its very own color filter array! That's right - an entire color filter array assigned to just one pixel! If this were ever developed, there would be no such thing as noise, at all. And each pixel would have accurate values for all three color channels. I'm not talking about the Foveon kind of stuff - that is nowhere near good enough. I literally mean an entire full-fledged CFA for every single pixel.

Someday! Probably not within the next 30-50 years, but someday, hopefully, our children's grandchildren will benefit from this type of technology. Or at least one can hope that they will.

"Most notably, the extra circuitry takes up a lot of space, thus decreasing the light-sensitive area of each pixel by a notable amount". Haven't they heard of Sony BSI technology? Just messing.

Arguably, the size of the light-sensitive area becomes less important, since the circuitry can measure a *practically* infinite number of photons.

I think it means they can't fit as many pixels in the same-size sensor, which would mean fewer MP in exchange for more DR.

Doesn't that also mean we could do without ND filters with this tech? Expose and keep the shutter open for as long as you want, then adjust exposure by -30 stops (this would probably have to be done in-camera, before writing the raw file, to avoid numeric range issues) and you're done.
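The numeric range concern is easy to quantify. Each stop doubles the light, so pulling exposure back by N stops means dividing the reconstructed value by 2**N, and the undivided value needs roughly N extra bits. Below is a minimal Python sketch, assuming an idealized noiseless pixel and ignoring motion and shot noise. The helper names and the 4,500-electron figure are hypothetical.

```python
def pull_exposure(total_electrons: int, stops: int) -> float:
    """Digitally darken a reconstructed pixel value by `stops` stops.

    Each stop halves the signal, so pulling N stops divides by 2**N.
    """
    return total_electrons / 2**stops


def bits_needed(value: int) -> int:
    """Smallest number of bits that can store a non-negative integer."""
    return max(value.bit_length(), 1)


# Hypothetical numbers: a pixel that collects 4,500 electrons in a "normal"
# exposure is instead exposed 2**30 times longer (30 stops more light).
normal_signal = 4_500
long_exposure_signal = normal_signal * 2**30

print(bits_needed(normal_signal))               # 13
print(bits_needed(long_exposure_signal))        # 43 (30 more bits before the pull)
print(pull_exposure(long_exposure_signal, 30))  # 4500.0 (back to the normal level)
```

Storing the value before the pull would need about 30 bits more than the normal exposure does. That is why doing the adjustment in-camera, before the raw file is written, is plausible under these assumptions.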

Yes, if the individual pixels were programmed to never record a color value of 240, then there would always be color information in every pixel, and no highlight would ever be "clipped", or unrecoverable.

Come to think of it, there is no reason that such programming couldn't be done for dark pixels, either.

I think that someday in the distant future, we will be able to set both the upper and lower limit for color values, meaning that no area of an image will ever be darker than we want it to be, and no area will ever be brighter than we want it to be. We would be able to make perfect HDRs with just one exposure, and get the image quality we now get when we blend several exposures together.

The thing that baffles me is why this is not available already. It requires only programming, and the processing power necessary to facilitate that programming. Simply writing a program that tells each pixel what range its color values must fall within is all it would take.

I'm no expert, but I believe it is not available now as currently the photosites are passive -- exposed, then read. This new approach requires them to have some autonomy -- the photosites need to reset themselves and count the number of such resets.