Resolution, bit depth, compression, bit rate. These are just a few of the countless parameters our cameras and files have. Let's talk about bit depth here. There's a lot of good talk about 10-bit and a lot of bad talk about 8-bit. The computer can tell the difference, but can you?

What Is Bit Depth?

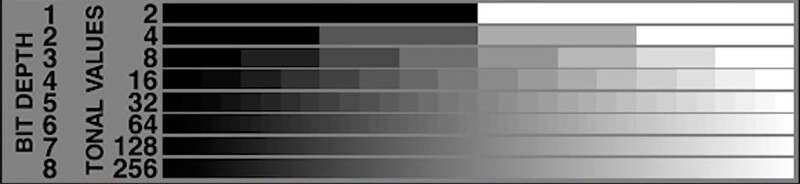

Bit depth determines the number of colors that can be stored for an image, whether it's a still picture or a frame of video footage. Each image is composed of red, green, and blue channels, and each channel can hold a range of shades of its color. The number of shades per channel is set by the bit depth of the image: n bits give two to the power of n shades. A 1-bit image has only two shades per color channel. A 3-bit image has two to the power of three shades, or a total of eight per channel. An 8-bit image has two to the power of eight shades for each of red, green, and blue, which is 256 different values per channel. Combining those channels gives 256 x 256 x 256 different color combinations, or roughly 16.7 million. A 10-bit image can store 1,024 shades per channel, or just over a billion color combinations.
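The arithmetic above is easy to check for yourself. Here is a minimal Python sketch; the function names `shades_per_channel` and `total_colors` are made up for this illustration and do not come from any library.

```python
def shades_per_channel(bits: int) -> int:
    """Number of distinct values one channel can hold at the given bit depth."""
    return 2 ** bits


def total_colors(bits: int, channels: int = 3) -> int:
    """Number of color combinations for `channels` channels of equal bit depth."""
    return shades_per_channel(bits) ** channels


if __name__ == "__main__":
    # The bit depths discussed in the paragraph above.
    for bits in (1, 3, 8, 10):
        print(f"{bits}-bit: {shades_per_channel(bits):,} shades per channel, "
              f"{total_colors(bits):,} colors")
```

For 8 bits this gives 256 shades and 16,777,216 colors; for 10 bits, 1,024 shades and 1,073,741,824 colors.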

Don't confuse this with "24-bit color." A color is represented by the three basic channels (excluding the alpha channel, as we are talking only about color, not transparency). The color bit depth is the sum of the bit depths of the channels, so 24-bit color means each color channel holds 8 bits of information. In other words, it is the same thing as an 8-bit-per-channel image.
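To see why three 8-bit channels add up to 24 bits, it can help to pack them into a single number. The sketch below is only an illustration: the helper names `pack_rgb` and `unpack_rgb` are hypothetical, and putting red in the highest byte is just one common convention, not something every file format follows.

```python
def pack_rgb(red: int, green: int, blue: int) -> int:
    """Pack three 8-bit channel values into one 24-bit integer (red highest)."""
    for value in (red, green, blue):
        if not 0 <= value <= 255:
            raise ValueError("each channel must fit in 8 bits (0-255)")
    # Each channel occupies its own 8-bit slot: 8 + 8 + 8 = 24 bits.
    return (red << 16) | (green << 8) | blue


def unpack_rgb(color: int) -> tuple[int, int, int]:
    """Split a 24-bit integer back into its (red, green, blue) channels."""
    return (color >> 16) & 0xFF, (color >> 8) & 0xFF, color & 0xFF


if __name__ == "__main__":
    orange = pack_rgb(255, 128, 0)
    print(hex(orange), unpack_rgb(orange))
    # Pure white is the largest 24-bit value and needs exactly 24 bits.
    print(pack_rgb(255, 255, 255).bit_length())
```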

What's the Bit Depth of Most Media Devices?

The majority of displays on the market show images at 8-bit depth, whether they are desktop monitors, laptop screens, mobile device screens, or media projectors. There are 10-bit monitors too, but not many of us have those. If you are curious: the human eye is commonly estimated to distinguish about 10 million colors.

What's the Point of Using 10-Bit Images?

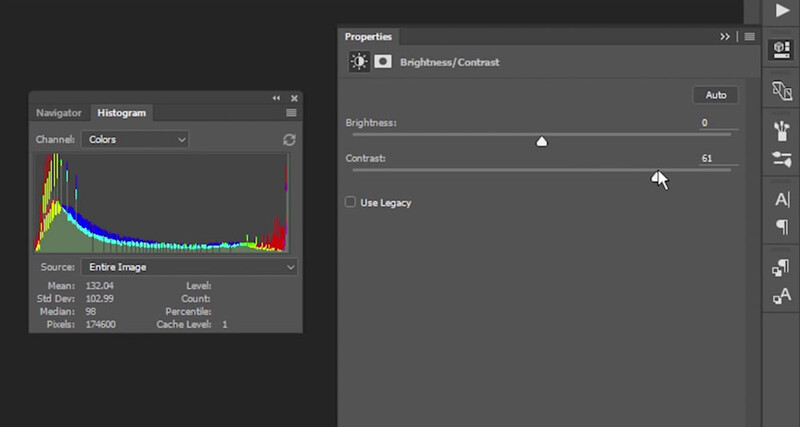

As we see, neither our eyes nor most of our displays can show us the glory of 10-bit images. What's the point of having so much data we can't see? For displaying there's no use at all. Even if the devices can interpret that vast amount of data, our eyes won't tell the difference. The only advantage is when processing that data. If you have an 8-bit image and you want to stretch the saturation or contrast for some reason, the processing software may not have enough data and may "tear" parts of the histogram. As a result, blank bars of missing data are formed. If there is denser data to work with, expanding the range does not cause such gaps.
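The "torn" histogram can be reproduced with a few lines of Python. This is a simplified sketch, assuming a plain linear contrast stretch on a single channel; real editing software uses more elaborate tone curves, and the names `stretch` and `empty_bins` are invented for this example.

```python
def stretch(value: int, lo: int, hi: int, out_max: int = 255) -> int:
    """Linearly map value from [lo, hi] onto [0, out_max], rounding half up."""
    span = hi - lo
    return ((value - lo) * out_max * 2 + span) // (2 * span)


def empty_bins(lo: int, hi: int, out_max: int = 255) -> int:
    """Count output levels that no input level lands on after the stretch."""
    used = {stretch(v, lo, hi, out_max) for v in range(lo, hi + 1)}
    return (out_max + 1) - len(used)


if __name__ == "__main__":
    # An 8-bit image whose tones only span levels 64..191 (128 levels),
    # stretched to fill the full 0..255 range.
    print(empty_bins(64, 191))
```

With only 128 input levels spread over 256 output levels, half of the histogram bins stay empty. Those are the blank bars.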

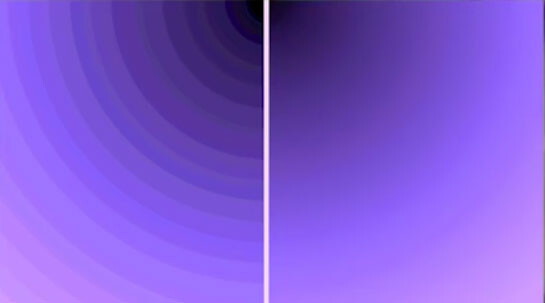

The result is the so-called "banding," where the original gradient is on the right and the "stretched" color spectrum is on the left.

This is why it's so important to be precise when shooting 8-bit images (like JPEG) or 8-bit video (as most DSLRs record). A precisely exposed shot usually won't call for heavy post-processing, and in the end you will have a quality result. If heavy processing is required, this is where 10 or more bits per channel show their advantage. When stretching the values of the pixels, the software has much more data to work with and thus produces a smoother, higher-quality result.
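Here is a rough way to put a number on "smoother." The Python sketch below assumes a simple linear stretch to an 8-bit output and compares the same tonal range recorded at 8 bits (levels 64..191) and at 10 bits (levels 256..767). The function names are hypothetical, and actual software will behave differently in the details.

```python
def stretched_levels(lo: int, hi: int, out_max: int = 255) -> list[int]:
    """Map every input level in [lo, hi] linearly onto [0, out_max]."""
    span = hi - lo
    # Integer arithmetic with round-half-up, to avoid floating-point surprises.
    return [((v - lo) * out_max * 2 + span) // (2 * span)
            for v in range(lo, hi + 1)]


def largest_step(lo: int, hi: int, out_max: int = 255) -> int:
    """Biggest jump between neighboring output levels; bigger means more banding."""
    levels = stretched_levels(lo, hi, out_max)
    return max(b - a for a, b in zip(levels, levels[1:]))


if __name__ == "__main__":
    print("8-bit source, largest step:", largest_step(64, 191))
    print("10-bit source, largest step:", largest_step(256, 767))
```

The 8-bit source ends up with jumps of up to 3 output levels between neighboring tones, while the 10-bit source never jumps by more than 1, so the gradient stays continuous on an 8-bit display.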

Working with 8-bit still images or 8-bit video footage is not bad unless you plan to make extensive color or contrast changes. Being a precise shooter always pays off, but there are times when you might need higher bit depth (or "deeper bit depth") files.

Conclusion

Raw still images are files of 12-, 14-, or 16-bit depth. Now you know why you can change the white balance or work with saturation, vibrance, and contrast on them with far less quality loss than when applying the same changes to 8-bit JPEG files. It is the same for video. Most DSLR video is 8 bits per channel, so you have to make your picture as good as possible in-camera; otherwise post-processing may lower the quality of your final product.

For more great tech-related tips, go to ThioJoeTech's YouTube channel.

13 Comments

I got my hands on a GH5 and I did some tests with 8 and 10 bit. I can't see a difference between the two, even when I do extreme exposure edits. Does anyone have any idea why?

Can you send raw files of both? Did you shoot in a flat color profile or the straight out of camera pre-graded footage?

I may put the raw footage online for everyone. I think for that particular test I was shooting in "standard."

Lee, I'm sorry I don't know why but I am curious what your thoughts are on the GH5 for your video work on the new Elia tutorials. How did it compare to your Nikon DSLR for stills? I know you guys were pumped about the in-camera time lapse feature - verdict?

I didn't have it for the Elia tutorial; I just got to use one that was in Dubai for a couple of days. We did a bunch of tests with it and it's pretty amazing. The footage looks great. The audio sounded great with a lav, the ISO performance was comparable to our D750, and the stabilization with "e stabilizer" is unbelievable. Many people won't ever have the need to shoot on a tripod. Literally my only gripe about the camera (so far) is that the ISO performance isn't close to the Sony A7SII.

We shot almost every timelapse in this tutorial with Panasonic LX100s and I have to say they are amazing. Soooo simple to use and no flicker out of the camera. I imagine the GH5 is just as good.

Our first impressions review with test footage from the GH5 should be up next week some time.

Nice, thanks for the feedback! Looking forward to reading the complete GH5 first impressions review soon

Probably the edits weren't that extreme or the footage was not that suitable for easily visible defects. For example if you don't have large areas with gradients you won't have the chance to see lots of banding in the final result. If the adjacent areas in the image are of different colors your eye won't see that there were 251 shades of red instead of 832 from a 10-bit image.

Also, the eye recognizes 10m colors. The 8-bit image has 16m. The banding is the easiest way to see the difference.

Personally, I'm glad there's not much difference. This means we have good enough tools in our hands.

"What's the point of having so much data we can't see? For displaying there's no use at all." This couldn't possibly be more wrong. I'm not going to go into details on why it is so very, very wrong, but suffice it to say that anyone who has seen visible banding in a blue sky or any other subtle gradation knows that the human eye can EASILY discern more than 256 luminance steps at once, and when you spread smaller level transitions across large spatial transitions, the limitations of 8-bit become even more painfully obvious.

Most of the time you see the effect of the compression, not the bit depth. You'd see the banding on your raw files on your 8-bit screen as well.

If you have noticed, when working in Photoshop (at least I can confirm that on CS6) there's banding even when working on raw files, because the application compresses the previews you see so that it works fast on different zoom levels. Once you save the file as a high quality JPEG the banding goes away (8-bit image).

I'm having a bit of a problem understanding the following excerpt:

A 2-bit depth image means there are only two color shades per color channel. For a 3-bit depth image there are two to the power of three shades, or a total of eight shades per channel. An 8-bit image means there are two to the power of eight shades for red, green, and blue.

--

For a 2 bit image, the possible values are 00, 01, 10, and 11. The statement for 3-bit and 8-bit is correct. The image below the paragraph shows four distinct shades for 2-bit depth.

I've been working with powers of 2 since 1972 as a computer programmer. After 65,536, I have to pull out my IBM "green card", which they changed to "yellow".

Hahaha. Great catch Ralph. Yes, 2^2 is 4, of course. It's a mistake. I'll correct it.

Pretty basic information offered in this article. One would think that most photographers reading this kind of site would know all that. Still useful for those that don't.

The biggest impediment to image and video quality is still the human that creates and processes it. As a video buff with a large collection of movies on disc, it never ceases to amaze me how bad and wildly varying video quality can be, and these are supposed to be professionals putting that content out.

There are lots of newbies on the site, as well as lots of myth believers. This article covers both parties.

As for video quality, yes, it varies and sometimes it doesn't matter much when the story is great.