The reviews on Intel’s 12th Gen Core desktop processors, named Alder Lake, have all come in, and the results are surprising. Whether you’re looking to upgrade your computer or just want to stay up to date on tech trends, you’ve got to see how these chips performed.

Puget Systems, a high-end custom PC builder with a focus on professional workflows, has always been one of my favorite authorities on testing. Unlike many tech reviewers, who emphasize gaming performance in their testing, Puget focuses on professional applications and suitable testing conditions for real-world performance (i.e. not overclocked).

The 12th Gen processors from Intel come at an important time for the brand. AMD’s 5000 series chips have proven to be monsters in both IPC and thread count, yielding great results in both lightly threaded and highly parallelizable tasks. With 12th Gen, however, Intel has made progress on both fronts. Core counts have gone up, with even i5 chips offering 6+4 cores (more on this odd architecture in a second). Meanwhile, the top-end 12900K now has 8+8 cores, drawing closer to the 5950X’s 16-core arrangement, at least on paper.

What makes those core counts odd is that Intel has pursued a hybrid approach, combining two different core designs on one chip. 12th Gen chips mix performance cores and efficiency cores. The performance cores are larger and draw more power, but offer the best performance in single-threaded applications. Meanwhile, the efficiency cores take up about a quarter of the die space of a performance core while delivering roughly half the performance, making them a great way to squeeze more multi-threaded performance into the same space. Put another way, four efficiency cores fit into one performance core’s “spot” and, in a workload that scales well across cores, deliver about the same multi-threaded performance as two performance cores.
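The area-versus-throughput trade-off is easy to check with a few lines of arithmetic. The sketch below uses only the rough ratios quoted above (a quarter of the area, half the performance) and assumes a workload that scales perfectly across cores, which real software rarely does. The names `P_CORE`, `E_CORE`, and the helper functions are made up for illustration.

```python
# Relative figures from the paragraph above: an efficiency core uses about a
# quarter of the die area of a performance core and delivers about half of its
# throughput. These are rough ratios, not measured values.
P_CORE = {"area": 1.0, "throughput": 1.0}
E_CORE = {"area": 0.25, "throughput": 0.5}


def cores_that_fit(core, area_budget):
    """Number of whole cores of this type that fit in the given die area."""
    return int(area_budget // core["area"])


def multithreaded_throughput(core, area_budget):
    """Total throughput for that area, assuming perfect scaling across cores."""
    return cores_that_fit(core, area_budget) * core["throughput"]


# Compare what fits in the area of a single performance core.
area_budget = P_CORE["area"]
p_result = multithreaded_throughput(P_CORE, area_budget)
e_result = multithreaded_throughput(E_CORE, area_budget)

print(f"P-cores: {cores_that_fit(P_CORE, area_budget)} core, throughput {p_result}")
print(f"E-cores: {cores_that_fit(E_CORE, area_budget)} cores, throughput {e_result}")
```

Under these assumptions, the four efficiency cores deliver twice the throughput of the single performance core occupying the same area, which is where the "same as two performance cores" comparison comes from.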

Testing Matters

Between these changes to core counts, the ever-more complex nature of clock speed boosting, and evolving standards like DDR5 and PCIe Gen 5, real-world benchmarking is more important than ever. Real-world benches can help cut through the marketing noise, particularly when they’re well run and tailored to your workflow.

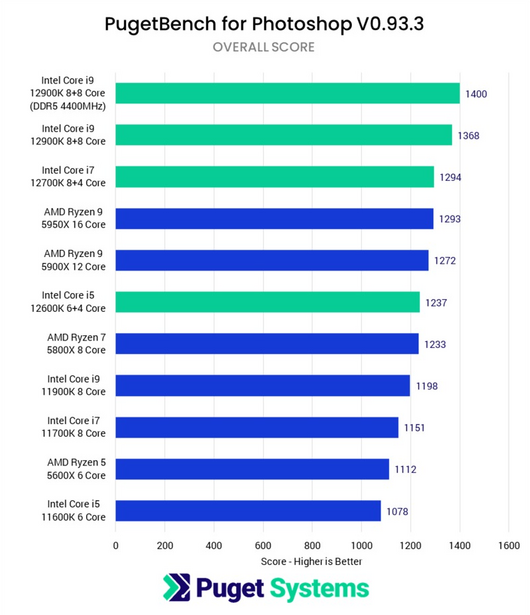

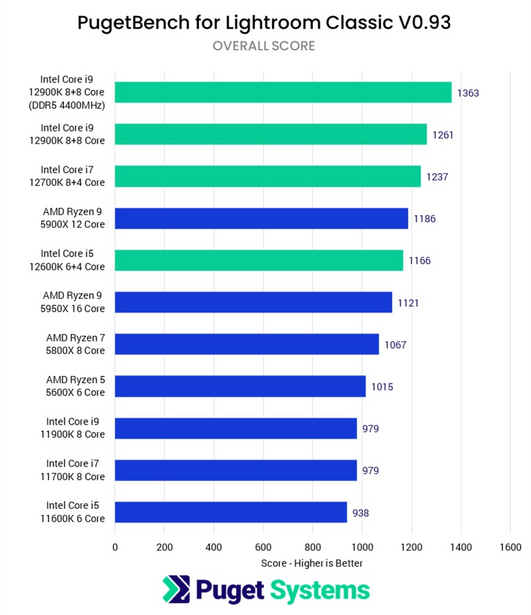

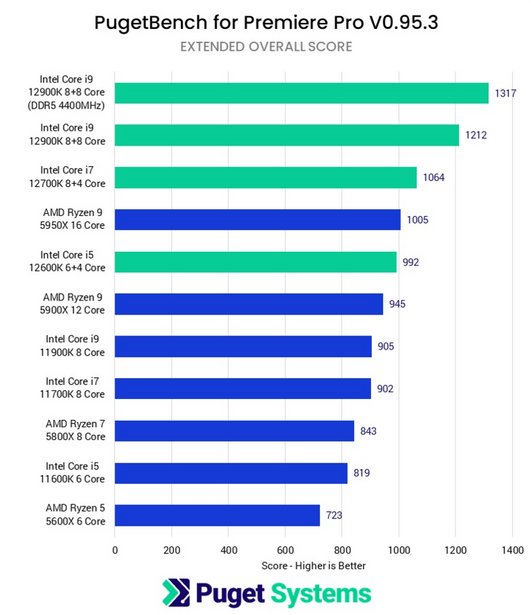

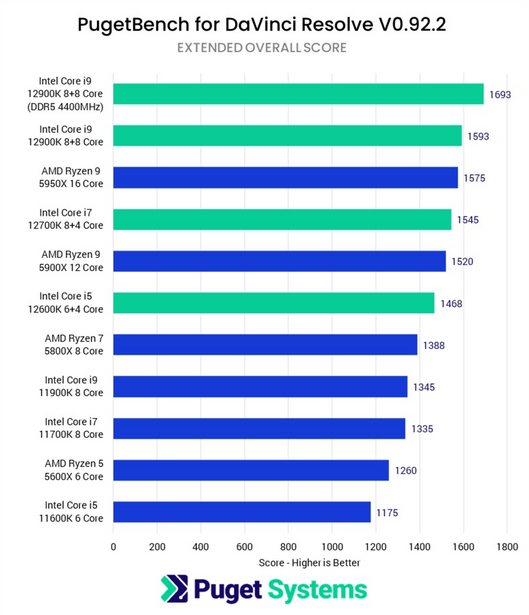

In this case, Puget delivers. Their benchmarking of the 12th Gen CPUs puts them up against AMD’s 5000 series and Intel’s older 11th Gen chips in Photoshop, Lightroom, Premiere, Resolve, Unreal, Cinema 4D, and more. For photographers, I particularly like that their Lightroom and Photoshop testing methodologies are so in-depth. They cover importing, library operations, Develop module operations, preview building, panoramas, HDR, and exporting. Other benchmarks often cover only something easy like exporting, which leaves blind spots in the testing.

Puget further separates results for Lightroom into an active and a passive metric. Active tasks, like scrolling through the Library module or working in the Develop module, can greatly impact how your machine feels in day-to-day use, while slow performance in passive tasks like exporting can be brutal for high-volume shooters like wedding photographers.

The Results

In both Photoshop and Lightroom, Intel’s 12th Gen chips like the 12900K and 12700K represent the high-water mark in performance. Even the i5-12600K can trade blows with Ryzen 7 and 9 chips. Although these victories aren’t huge, with Intel delivering about 5 to 10% better performance in Photoshop over similarly priced AMD chips, they represent a win nonetheless.

In Lightroom, an important result comes from the 12900K. While the 12th Gen chips can use both common DDR4 memory and the newer DDR5 memory, Lightroom was the only photo application where the memory type made a significant performance difference. The 12900K, with DDR5, came in 15% above the previous winner, the 5900X. The 12700K and 12600K also yielded about 15% performance improvements over the 5800X and 5600X, respectively.

For video users, the choice of chip comes down to whether you’re editing in Premiere or Resolve. Premiere gave a 25-40% performance lead to Intel over similarly priced AMD chips, with the odd note that the 12900K was 8% slower on Windows 11 than on Windows 10. Regardless of that bug, the difference in performance was so great that the i5-12600K was able to score similarly to the 16-core 5950X, a very surprising result.

On the Resolve side, the performance is less startling. Intel’s top-end chips still sit on top, but the performance-per-dollar gaps are closer to 10%. The i5 does still stand out as a low-priced powerhouse for video work, though.

Of note is how this generation also brings a number of opportunities for improvement in these results. As the first real hybrid architecture for Windows, there are several areas ripe for optimization. Thread scheduling, the process by which work gets assigned to the actual cores, is one of the clearest areas. Additionally, Windows 11 offers a big performance bump in Photoshop, with Puget noting a 28% improvement over Windows 10 in their testing. If you’re not interested in moving to 11 right now, this can be a huge factor.

Also, the expected improvements in DDR5 memory, in both price and performance, can be a significant factor. The early modules in every memory generation are more expensive and less performant than modules later in the generation, and DDR5 looks to be the same. This means that holding off just a while on the upgrade, or even rolling over your DDR4 kit, can yield performance improvements down the line.

At the lower end, the i5 and i7 chips have a significant lead over the Ryzen 5 and 7 chips, often thanks to Intel’s higher effective core count. At the higher end, the 12900K is the new performance king for both Lightroom and Photoshop, although DDR5 is necessary to open a noticeable gap. As a result, if you’re looking to build a new workstation, you’ll probably want to go Team Blue this time around. If instead of building, you’re looking to get a computer delivered, consider checking out Puget Systems’ rigs. Their workstations are tailored to real-world workflows like Photoshop and Lightroom, making them a great choice for users who just want a high-performance system without having to stress over specs.

Header image courtesy of Ralfs Blumbergs.

21 Comments

I feel the Intel CPUs are pretty lackluster these days.

If you compare the 12900K with the Ryzen 5950X - the latter is faster and more than twice as efficient per watt (in spite of being a year older):

https://www.cpubenchmark.net/compare/Intel-i9-12900K-vs-AMD-Ryzen-9-595…

I've never heard of PugetBench though...

CPUBenchmark is just one of dozens of synthetic benchmarks

PugetBench is one of a handful of real world benchmarks

Real World > Synthetic

No argument Intel is more power hungry, but, for certain applications, you go by what you feel will benefit you. In these PugetBench tests, Intel is between 7% and 31% faster. And is $110 cheaper.

Just another example why you don't want to rely solely on synthetic benchmarks: my PC with an AMD 3600 has a Cinebench R23 of 9600 points, while my M1 Macbook Air has 7500 points. However, when I render a 5 min 1080p footage with Davinci Resolve, my PC takes about 2 min 40 secs while my M1 takes 1 min 26 secs. The one with the lower synthetic score is almost twice as fast.

Synthetic benching reminds me of when car reviewers do 0-60 MPH speeds. Just because a car is fast with that speed does not mean it'll be fast around a track lap after lap after lap after lap.

Your real world result won't depend solely on CPU. Very far from it actually. When I transcode and render videos in Resolve - my system is using primarily the GPU (RTX 3090), and the CPU is pretty much irrelevant. If the GPU is disabled - other things come into play like RAM speed, SSD speed (your video still needs to be read and written ;), the overall system bus throughput (different motherboards can produce different results with the same CPU), and finally - supported instruction sets, hardware codecs, and optimization towards particular tasks.

I don't know anyone who's rendering videos relying on an Intel or AMD CPU alone. You might be this person ;) I have some good news too - if you add a decent video card to your PC - it will likely beat the M1: https://forums.macrumors.com/threads/video-render-comparison-mbp-m1-max…

People still transcode when they've got a $2000-$3000 GPU? ;) When I transcode or render on Resolve v17.4.2, my system (PC) uses both CPU + GPU. Depending on what I'm doing or the codecs, it's either CPU 98%+ and GPU 35-67%+, or CPU 80%+ and GPU 98%+. The latter is when rendering h.265. So, yeah, CPU is pretty relevant.

--- "I don't know anyone who's rendering videos relying on an Intel or AMD CPU alone. You might be this person ;)"

Nope, not me. Especially since Resolve requires you to have qualifying GPU. lol :P

I briefly checked a few times a year+ ago - my GPU utilization was pretty high. Out of curiosity I ran it now - a quick rendering of an 8K CRM => 4kDCI h.265 [best] with a curve color correction and nothing else, and this is what I got: CPU < 80, GPU < 100. The source was loading from a large HDD-based RAID5 and rendered to a PCIe 4.0 SSD. Yes, the CPU is definitely in use, and it's more likely there to feed the GPU with some work :) NVENC has no idea about the proprietary Canon format, so that's likely where the CPU comes into play (decoding). Encoding is done via NVENC for sure.

Anyway... a few percent gain the PugetBench numbers show means nothing if you add a GPU (real world use case), but if you really look at the wattage - it's 105W AMD vs 240W Intel. Quite a difference, don't you think?

--- "but if you really look at the wattage - it's 105W AMD vs 240W Intel. Quite a difference, don't you think?"

Well, why do you think I'm running an AMD 3600? I did a budget upgrade in Dec 2020 from when I first built my PC in Dec 2012 running an Intel i5-3570K (something like that).

I only have 2 Noctua fans, CPU and rear. After running Cinebench R23 throttle test for 10 mins, it stays cool and quiet. Max temp is 75 c.

The M1 also has a full video coder/decoder that's independent of the CPU or GPU. What you have on your PC depends on the GPU, whether that's a full video rendering pipeline being accelerated or just parts of the pipeline thrown over to the GPU.

Back in the days of Motorola's 68000 it was common knowledge that software only uses about 10-15% of the CPU's real power. Articles like the one above are useless. Such tests do not really measure CPU performance. They just show what kind of software runs a bit faster on a more modern or different CPU.

What would be really interesting is how much power is needed to do the tasks. How energy efficient a CPU is, how many watts per MIPS it uses.

But, what are you, as the end user, looking for? An efficient CPU power wise or the best daily performance you can get for your money? These aren't laptop processors here, so power draw is not a consideration. This comparison is not about all out CPU performance with computational speed and teraflops, but what CPU will show you better performance on a day to day basis using the software that most of the people on this blog use daily? If you're purchasing a new computer, this shows what is going to be faster doing the tasks you normally do (assuming all other components are similar).

This confirms pretty much what has been happening for the past decade, AMD provides a higher spec'd processor for lower money. When you're comparing two similarly spec'd processors, Intel has a lot more performance. When comparing similarly priced processors, the Intel is in the lead - but ever so slightly.

Power consumption IS a factor. If you don't care about ecology, at least you care about fan noise, right? And more power consumption means higher running costs as well.

Intel just scales, TDP goes up again, power consumption goes up. I don't care about 5-10% faster. And I don't care about the manufacturer.

But the software manufacturers could do more than always focus on faster hardware.

If you leave your computer on all the time, 24/7, at least in the USA, the energy cost difference between a 95W TDP and a 125W TDP is roughly $35 per year - and remember that's only if it's running at its TDP all the time. During idle times, that will be much less. I have never had the fan speed on my desktop be anything more than a quiet hum that is almost imperceptible - even when stressing it under heavy load.
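For anyone who wants to check that figure, here is a rough sketch of the arithmetic. The electricity rate of $0.13 per kWh is an assumption (rates vary a lot by region), and the function name is made up for illustration. It also takes the worst case of both chips sitting at their TDP around the clock.

```python
HOURS_PER_YEAR = 24 * 365  # running 24/7, ignoring leap years


def annual_cost_difference(tdp_low_watts, tdp_high_watts, usd_per_kwh):
    """Extra yearly electricity cost if both chips ran at TDP all the time."""
    extra_kwh = (tdp_high_watts - tdp_low_watts) * HOURS_PER_YEAR / 1000
    return extra_kwh * usd_per_kwh


# Assumed rate, not taken from the comment above.
ASSUMED_USD_PER_KWH = 0.13

cost = annual_cost_difference(95, 125, ASSUMED_USD_PER_KWH)
print(f"Extra cost per year at constant TDP: ${cost:.2f}")
```

With that assumed rate, the 30W gap works out to about $34 a year, in line with the rough $35 estimate. Any idle time brings it down further.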

Sure, but you are only one. What if there are millions? Build another power plant? For the other part I suggest you refer to the answer from Tammie above (RTX 3090).

A single incandescent light bulb in a decently used location will likely use more energy per year than the difference in power consumption between these processors. If you really want to be power efficient, you should only be using laptop processors. Either way, your argument is verging on being pedantic.

Look at all of the synthetic benchmarks, watt per MIPS, whatever you want to for your choice. For the average photography/videography related consumer (and reader of this blog), this test shows the performance of these various CPUs using the software that they use regularly (all other system components being as even as possible). Other system components can cause these performance factors to change greatly, as the lowest rated CPU on this list with a high end video card will likely outperform the top CPU without one. But this isn't a system comparison, just one of CPUs.

I've got to go now as I think I left a battery on a charger for 15 minutes after it said it was done and I don't want to cause a brownout.

If you do not want to or cannot see the deeper connections, we have no basis for further conversation, I am sorry. And if you then also make fun of it, even less so. Enjoy your life.

Technology to make Lightroom fast doesn’t exist yet.

CPU is only part of the equation these days. Owning an Intel Alienware 17, A desktop Ryzen/RTX3070 and a M1 Pro MBP, the winner is the M1 Pro and higher. I love working with huge workloads on an unplugged M1 MBP on stress-free locales like cafes and your outdoor garden…

The M1 is very impressive for the form-factor, but the 3080/5950x style of hardware just has more horsepower when you don't need it to be laptop portable. Also, there's still a few bumps with more specialty programs and ARM.

I'm staying with AMD - due to the fact Intel has been a multiple convicted monopoly-abuser - and for what I need - the AMD CPUs and mainboards give me more bang for the buck. Speed is relative - what matters is that all components are working well together at a reasonable cost. Look at the performance/energy consumption and you'll get other results. Another issue I have with Intel is the rapid change in chipsets & sockets, coolers and so on. With an AMD platform the mainboards are cheaper - and last an extra generation. I can plug a 4th gen. CPU into a 2nd gen. mainboard - can we do that with an Intel platform where almost every gen. has a new mainboard, new socket and chipset?

Those looking for top performance should have a look at the server-chipsets and cpu's - and get away from the top-line of desktop cpu's instead - to have more memory banks - and have more PCI-e lanes for I/O.

OMG 400 (Artificially meaningless) POINTS FASTER!? IM RUSHING TO UPGRADE!

.

Here is the reality. Even if you own a Gen 2 i5 2400 you have more than enough processing power to run photoshop at full performance when not using filters.

.

If you are using filters, you will see extremely microscopic improvements in performance if you have anything over Gen 6 Intel or Gen 3 threadripper.

.

In other words, CPUs from 7 years ago are powerful enough to run even the most demanding tasks in Photoshop in real time.

.

You know what will give you actual performance increases? An SSD to load Photoshop in under 10 seconds. 16-32GB ddr4 ram to process in real time without having to max RAM or unload resources.

.

Other than that, the only thing that will increase performance is PCIe Gen 5. And only if you are attempting to work with raster files larger than 10K native. Vector requires even less power.

.

Photoshop is not a game or a 3D rendering program like Maya, Blender, Unity, Unreal etc, that is at the height of what technology offers and gobbles up performance. It’s a pixel based editing program. Relax.

Looks like Intel has (finally) caught up with AMD. Time for AMD to release their new 7000 series CPU's.

Puget and most other reviewers measure the irrelevant vs real world for Adobe users, assuming we are looking for "hard core" users.

Almost all Hard core users will move back and forth between lightroom and photoshop (and perhaps other adobe apps or, say, excel and outlook).

The "time" where improvement is important and should be measured is a set of "round trip" benchmarks. This is where pain and progress are found. All these existing "benchmarks" are useless arm waving. The real metric, the one that is needed, is when single-thread efficiency and multi-threading efficiency hit the road together. Round tripping (Ask around if you do not understand....) leads to memory leaks and other problems, and then...... sluggishness and crashes.

Puget benchmarks these largely invariant, "within" app, unstressed, metrics. These are the "trees" but they say nothing about the "forest".

At some point computer buyers are going to turn to someone who can really tell them if Intel, AMD, and the motherboard makers are offering real improvement in day to day usage. (Anybody?? anybody? per Ferris B's teacher)

Perhaps I should not single out Puget since the pundits/writers are also guilty of these exercises in irrelevance.