For many centuries, scientists argued vehemently about the nature of light. Two sides debated a question pivotal to the development of physics: is light a particle or a wave? It wasn't until the 20th century that one of the most startling revelations about our universe came to prominence: light is both.

We see the wave-particle duality in photography every day. Exposure is governed by the particle nature of light, namely how your shutter speed and aperture dictate how many photons reach your sensor and how your ISO dictates how sensitive the sensor is to those photons. On the other hand, the wave nature of light determines such characteristics as color (my girlfriend is particularly fond of a certain wavelength around 490 nm).

Bending Light

One particularly fascinating property of the wave-like nature of light is diffraction. When light passes through an opening, it bends near the edges, and the smaller the opening, the more pronounced the bending. This is an issue for photographers because light rays that are bent and spread out are no longer focused when they reach the sensor. A lens should focus a point of light to a point on the other side; when it doesn’t, that point is recorded as a small blur spot. The largest blur spot that still looks like a point, given our eyes’ ability to resolve detail, is the aptly named circle of confusion, a measure of the maximum allowable error. This isn’t all, though. Bent light can end up out of phase and interfere with itself, creating a regular pattern of cancelled and amplified luminosity: a bright central spot, called the Airy Disk, surrounded by fainter rings. It is named after the astronomer George Biddell Airy, who first described it mathematically.
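
For a lens limited only by diffraction, the diameter of the Airy Disk (out to the first dark ring) is approximately 2.44·λ·N, where λ is the wavelength of the light and N is the f-number. The short sketch below assumes green light at 550 nm, an illustrative choice rather than a figure from this article, and prints the disk size across common apertures.

```python
# Minimal sketch: Airy Disk diameter (to the first dark ring) for an ideal,
# diffraction-limited lens. Assumes green light at 0.55 µm by default.

def airy_disk_diameter_um(f_number: float, wavelength_um: float = 0.55) -> float:
    """Diameter of the central Airy spot in microns: d = 2.44 * λ * N."""
    return 2.44 * wavelength_um * f_number


if __name__ == "__main__":
    for n in (2.8, 4, 5.6, 8, 11, 16, 22):
        print(f"f/{n}: {airy_disk_diameter_um(n):.1f} µm")
```

Because the diameter is proportional to N, each full stop down (N times about 1.4) enlarges the disk by the same factor, and doubling the f-number doubles it.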

However, diffraction is not always a problem. If the diameter of the Airy Disk is small compared with the circle of confusion and the camera’s pixel size, the pixels are simply too big to record the small error. When the Airy Disk approaches the size of the circle of confusion or of a pixel, diffraction becomes noticeable.

There are two ways to make diffraction more visible: reduce the size of the hole the light must pass through (i.e., close down the aperture) or increase the sensor’s ability to resolve fine detail (i.e., reduce the size of individual pixels). This creates a tradeoff: higher-resolution cameras with smaller, more tightly packed pixels have less leeway to tolerate small apertures. Thanks to anti-aliasing filters and the Rayleigh Criterion (which states that two disks become unresolvable when their centers are closer than half their width), Airy Disk diameters can typically reach 2 to 3 times the width of an individual pixel before issues arise.
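
Turning that rule of thumb around gives a rough aperture at which diffraction starts to show for a given pixel pitch: set the Airy Disk diameter, about 2.44·λ·N for an ideal lens, equal to k pixel widths and solve for N. The sketch below assumes green light at 550 nm and treats k as a parameter in the 2 to 3 range mentioned above; the example pitch of 4.14 µm is the 5DS value discussed below. The output is an estimate, not a hard threshold.

```python
# Minimal sketch: f-number at which an ideal lens's Airy Disk spans k pixel
# widths. Assumptions: 550 nm light and k somewhere in the 2-3 range.

def diffraction_limited_f_number(pixel_pitch_um: float,
                                 k: float = 2.5,
                                 wavelength_um: float = 0.55) -> float:
    """Solve 2.44 * λ * N = k * p for N."""
    return k * pixel_pitch_um / (2.44 * wavelength_um)


if __name__ == "__main__":
    pitch = 4.14  # microns
    for k in (2.0, 2.5, 3.0):
        print(f"k = {k}: about f/{diffraction_limited_f_number(pitch, k):.1f}")
```

Smaller pixels or a stricter (smaller) k both pull the estimated limit toward wider apertures, which is the tradeoff described above.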

The Canon 5DS

When Canon first announced the 5DS and 5DS R, with more than double the resolution of the 5D Mark III, my thoughts ranged from “I’m going to need a lot more RAM” to “those are some small pixels.” In fact, the 5DS has a pixel pitch of 4.14 microns, versus 6.25 microns on the 5D Mark III. From a purely physics standpoint (advancements in camera technology aside), this means the 5DS will not perform as well in low light (hence its maximum ISO of 6,400, compared to 25,600 on the 5D Mark III), but of course, the 5DS is truly built for studio and landscape work.
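
Pixel pitch is the sensor width divided by the number of pixels across it. The sketch below assumes a 36 mm-wide sensor and image dimensions of 8688 × 5792 (5DS) and 5760 × 3840 (5D Mark III), taken from Canon’s published specifications; treat those numbers as inputs you should verify. It reproduces the 4.14 and 6.25 micron figures and a pixel-count ratio of about 2.3.

```python
# Minimal sketch: pixel pitch from sensor width and pixel count.
# Assumptions: a 36 mm-wide sensor and the image dimensions listed below.

SENSOR_WIDTH_MM = 36.0

CAMERAS = {
    # name: (pixels across, pixels down), assumed from published specs
    "5DS": (8688, 5792),
    "5D Mark III": (5760, 3840),
}


def pixel_pitch_um(sensor_width_mm: float, pixels_across: int) -> float:
    """Centre-to-centre pixel spacing in microns."""
    return sensor_width_mm * 1000.0 / pixels_across


def pixel_count_ratio(a: str, b: str) -> float:
    """Total pixel count of camera a divided by that of camera b."""
    (wa, ha), (wb, hb) = CAMERAS[a], CAMERAS[b]
    return (wa * ha) / (wb * hb)


if __name__ == "__main__":
    for name, (across, _) in CAMERAS.items():
        print(f"{name}: {pixel_pitch_um(SENSOR_WIDTH_MM, across):.2f} µm")
    print(f"pixel-count ratio: {pixel_count_ratio('5DS', '5D Mark III'):.2f}")
```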

Both studio and landscape photographers demand the utmost level of detail in their work. Often, this means using small apertures to ensure that the entire image is sharp and no detail is lost. With a more-than-twofold jump in pixel count, the 5DS could be a recipe for diffraction issues. On a 5D Mark III, the Airy Disk begins to exceed the circle of confusion just after f/11. That is the camera’s diffraction limit: the point at which diffraction begins to become visible when viewing an image at 100% at a typical viewing distance. This is different from the diffraction cutoff frequency, the point at which Airy Disks merge completely and the lens can no longer pass any detail as fine as the pixel grid can record. Think of the range between the diffraction limit and the cutoff frequency as a zone of diminishing returns. The 5DS, on the other hand, reaches its diffraction limit just before f/8, slightly over a full stop sooner than the 5D Mark III. This might worry landscape photographers and anyone else who relies on a large depth of field.
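
Applying the same rule of thumb to both pixel pitches reproduces the numbers in this section. The sketch below assumes 550 nm light and k = 2.5 pixel widths for the diffraction limit, and estimates the cutoff as the f-number at which a perfect lens’s cutoff frequency, 1/(λN), falls to the sensor’s Nyquist frequency, 1/(2p). Both are modeling assumptions, not Canon specifications.

```python
import math

# Minimal sketch comparing the two sensors. Assumptions: 550 nm light,
# a diffraction limit at k = 2.5 pixel widths, and a cutoff where the
# lens cutoff frequency 1/(λN) drops to the sensor Nyquist frequency 1/(2p).

WAVELENGTH_UM = 0.55
K_PIXELS = 2.5


def diffraction_limit(pitch_um: float) -> float:
    """f-number where the Airy Disk (2.44 λ N) spans K_PIXELS pixels."""
    return K_PIXELS * pitch_um / (2.44 * WAVELENGTH_UM)


def nyquist_cutoff(pitch_um: float) -> float:
    """f-number where 1/(λN) equals 1/(2p), i.e. N = 2p / λ."""
    return 2.0 * pitch_um / WAVELENGTH_UM


def stops_between(n_wide: float, n_narrow: float) -> float:
    """Number of stops between two f-numbers (one stop = factor √2)."""
    return 2.0 * math.log2(n_narrow / n_wide)


if __name__ == "__main__":
    pitches = {"5DS": 4.14, "5D Mark III": 6.25}
    for name, p in pitches.items():
        print(f"{name}: limit ≈ f/{diffraction_limit(p):.1f}, "
              f"cutoff ≈ f/{nyquist_cutoff(p):.1f}")
    gap = stops_between(diffraction_limit(4.14), diffraction_limit(6.25))
    print(f"5DS reaches its limit about {gap:.2f} stops sooner")
```

With these assumptions the 5DS lands just under f/8 and the 5D Mark III just over f/11, about 1.2 stops apart. Changing k moves both limits but not the gap, which depends only on the ratio of the pixel pitches.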

Should you be worried? Absolutely not. There is a key assumption behind these calculations: a perfect lens, one limited only by diffraction and therefore sharpest wide open. To isolate the effect of the camera sensor on diffraction, we had to remove the other variable: the lens. Of course, even the most spectacular modern lenses are not perfect optical instruments. In practice, lens aberrations typically overwhelm the effects of diffraction for the first few f-stops; otherwise, we would never stop down a lens to increase sharpness. All other variables being equal, a 50.6 megapixel sensor will always resolve at least as much detail as a 22.3 megapixel sensor.

What this does mean is that if you’re used to balancing depth of field against the loss of sharpness from diffraction, you should take some time to recalibrate where you make that tradeoff if you’ve ordered a high-megapixel camera. Because the diminishing returns begin at a wider aperture, you might prefer to open your lens an extra stop and either increase your subject distance to recover depth of field or focus a bit farther out to stay at your new hyperfocal distance.
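
To see how far “a bit farther out” is, the standard hyperfocal formula H = f²/(N·c) + f can be evaluated before and after opening up a stop. The sketch assumes a 24 mm lens and a 0.03 mm circle of confusion, a common full-frame convention rather than a value from this article.

```python
# Minimal sketch: how the hyperfocal distance moves when you open up a stop.
# Assumptions: a 24 mm lens and a 0.03 mm circle of confusion (a common
# full-frame convention, not a value from the article).

def hyperfocal_mm(focal_mm: float, f_number: float, coc_mm: float = 0.03) -> float:
    """Hyperfocal distance H = f^2 / (N * c) + f, in millimetres."""
    return focal_mm ** 2 / (f_number * coc_mm) + focal_mm


if __name__ == "__main__":
    for n in (11, 8):
        print(f"24 mm at f/{n}: focus at about {hyperfocal_mm(24, n) / 1000:.2f} m")
```

Under these assumptions, opening from f/11 to f/8 pushes the hyperfocal distance from roughly 1.8 m to 2.4 m; focused there, everything from about half that distance to infinity stays acceptably sharp.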

33 Comments

This article made my head spin.

Interesting article. It's nice to know there's another fellow PhD student out there who desperately needs some creative experiences :)

Thank you, Scott! I think creativity is so vital to staying balanced! :) What's your area of study?

I hear ya. For me it's agricultural communications

Thanks for keeping it nerdy, Alex! :) We need more of the math side of things around here!

Totally agreed

I'm always happy to increase the nerdy factor, Aaron! :)

Numbers don't lie, and I miss science and physics :). I wonder who's the idiot who gave Aaron a thumbs down. Why!!!!

They hate us 'cause they anus. ;)

Great insight man!

Thank you, Peter! I really appreciate the compliment!

For those who are terribly worried about higher pixel density leading to higher diffraction, you can always make the leap to medium format.

Hey man, can I borrow 30k?

You sound jaded that your business hasn't brought you to that level.

A humorous comment about a thing is not always to be perceived as criticism. That said, in this day and age I think 10-12k max would seem fair for a medium format back. 30k is too much to charge for such a system, no matter how much you make.

Well that was a tough read.

Thanks for the awesome article, Alex! I've studied light theory for a while now, and some of these ideas help me better understand it!

Thank you, Michael! I think it's such a fascinating topic.

I enjoyed this article a lot, thank you!

Thank you, John! I'm so glad you enjoyed it!

Does just looking at resolution from a pixel count standpoint make sense? I thought not?

"{...When Canon first announced the 5DS and 5DS R, with more than double the resolution of the 5D Mark III,...}"

Hi Michael,

Camera sensor resolution is always measured in absolute magnitude, not in pixels per unit distance as in displays.

I guess that's my point: you mentioned "sensor resolution" (also understood to be pixel resolution) in your reply, yet in the article it's just "resolution". At the risk of placing too much value on semantics (not a big risk, as this article is very technical), you say "more than double the resolution". I was always led to believe we should understand "resolution" as depending on a number of factors, some of which are completely independent of "sensor resolution" or pixel count.

Again, I am not an expert by any means; I am just curious what your take is on the term "resolution" and how you used it.

Hi Michael,

I think there's a contextual argument here. Resolution in the perceptual sense is influenced by a multitude of factors, but in this case, I was referring to the total pixel count of the two cameras, which is referred to as a camera's "resolution." The word definitely has multiple nuanced meanings depending on its usage.

A while back Steve Perry made a good video on this very subject, which you can view here:

https://www.youtube.com/watch?v=N0FXoWdHXTk

Basically, you shouldn't lose too much sleep over it, although of course you should keep it in mind.

In studio photography today you have the option of focus stacking, assuming you have the time and budget for it. I recently did three product shoots this way because the client wanted his products (all shot in macro) in perfect focus from front to rear. This allows you the luxury of shooting at the optimum aperture, getting spectacular results from any camera.
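
Focus stacking tools differ in the details, but the core idea can be sketched: for each pixel, keep the value from whichever frame shows the most local contrast there. The sketch below is a toy illustration, not any particular product's algorithm; it assumes aligned, equally sized grayscale frames stored as nested lists and uses the absolute 4-neighbour Laplacian as its sharpness measure. Real tools also align the frames and smooth the selection map to avoid seams.

```python
# Toy focus-stacking sketch: per pixel, keep the value from the frame with the
# most local contrast, measured by the absolute 4-neighbour Laplacian.
# Assumptions: frames are aligned, the same size, and grayscale nested lists.

from typing import List

Image = List[List[float]]


def laplacian_abs(img: Image, r: int, c: int) -> float:
    """|4*center - sum of neighbours|, clamping coordinates at the border."""
    h, w = len(img), len(img[0])

    def px(rr: int, cc: int) -> float:
        return img[min(max(rr, 0), h - 1)][min(max(cc, 0), w - 1)]

    neighbours = px(r - 1, c) + px(r + 1, c) + px(r, c - 1) + px(r, c + 1)
    return abs(4 * img[r][c] - neighbours)


def focus_stack(frames: List[Image]) -> Image:
    """Merge frames by picking, per pixel, the frame that is sharpest there."""
    h, w = len(frames[0]), len(frames[0][0])
    out = [[0.0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            best = max(frames, key=lambda f: laplacian_abs(f, r, c))
            out[r][c] = best[r][c]
    return out


if __name__ == "__main__":
    size = 8
    sharp = [[100.0 if (r + c) % 2 else 0.0 for c in range(size)] for r in range(size)]
    # Out-of-focus regions are modelled as flat grey (value 50).
    near = [[sharp[r][c] if c < size // 2 else 50.0 for c in range(size)] for r in range(size)]
    far = [[sharp[r][c] if c >= size // 2 else 50.0 for c in range(size)] for r in range(size)]
    print(focus_stack([near, far]) == sharp)
```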

Even if your shot suffers from diffraction, a deconvolver can compensate for it, either partially or completely, depending on its severity. Most people don't realize that Photoshop's Smart Sharpen is a basic deconvolver that works fairly well at sharpening before ringing artifacts set in; those appear sooner with processes like high pass or the Jurassic unsharp masking. Lightroom's sharpening tool also appears to be a basic deconvolver, with the added benefit of a low-frequency masking slider.
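
As an illustration of what deconvolution means, here is Richardson-Lucy, a classic iterative method, in one dimension. It is a textbook algorithm chosen for clarity; Adobe does not document what Smart Sharpen or Lightroom use internally, so this is not a description of those tools. The sketch assumes a known, normalised blur kernel (PSF), positive data, and no noise.

```python
# Minimal 1-D Richardson-Lucy deconvolution sketch. Illustrative only: this
# textbook algorithm is not claimed to be what Photoshop or Lightroom use.
# Assumptions: a known, normalised blur kernel (PSF) and positive data.

from typing import List


def convolve_same(signal: List[float], kernel: List[float]) -> List[float]:
    """Discrete convolution, same length as `signal`, edges clamped."""
    n, half = len(signal), len(kernel) // 2
    out = []
    for i in range(n):
        acc = 0.0
        for j, k in enumerate(kernel):
            idx = min(max(i - j + half, 0), n - 1)  # clamp at the borders
            acc += k * signal[idx]
        out.append(acc)
    return out


def richardson_lucy(observed: List[float], psf: List[float],
                    iterations: int = 30) -> List[float]:
    """Iteratively refine an estimate so that conv(estimate, psf) ≈ observed."""
    psf_flipped = psf[::-1]
    estimate = list(observed)  # start from the blurred data itself
    for _ in range(iterations):
        reblurred = convolve_same(estimate, psf)
        ratio = [o / max(b, 1e-12) for o, b in zip(observed, reblurred)]
        correction = convolve_same(ratio, psf_flipped)
        estimate = [e * c for e, c in zip(estimate, correction)]
    return estimate


if __name__ == "__main__":
    psf = [0.2, 0.6, 0.2]  # hypothetical blur kernel
    truth = [10.0] * 5 + [50.0] + [10.0] * 5  # one bright detail
    blurred = convolve_same(truth, psf)
    restored = richardson_lucy(blurred, psf)

    def sq_error(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    print(f"error before: {sq_error(blurred, truth):.1f}, "
          f"after: {sq_error(restored, truth):.1f}")
```

With real, noisy images, running too many iterations amplifies noise and produces the ringing artifacts mentioned above, which is why practical tools limit the strength of the correction.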

You don't need to be using a camera like the Nikon D800 or the new Canons to deal with this, either; this is certainly nothing new in production. I regularly freelance in a studio where Mk IIs and Mk IIIs are used, with products shot at f/22 and beyond, and I make regular use of Smart Sharpen on the product shots in post.

I saw it a few weeks ago, and while googling the topic I found this article (which includes a kind of simulator): http://www.cambridgeincolour.com/tutorials/diffraction-photography.htm

I remember running into this a while back. Good info as well.

I feel like Einstein deserves at least a shoutout in this article :)

I'm not being pedantic, but it is important to correct a statement made in this otherwise excellent article. ISO does not "dictate how sensitive the sensor is to (those) photons." ISO does not change sensor sensitivity; it is simply a volume control. If you set ISO to, say, 100 and expose so that some sensor sites are almost completely filled with light (they have collected as many photons as they can), then at ISO 200, exposing for the same brightness under the same lighting, you will collect half as many photons. However, at ISO 200 the volume control is turned up so that the half-full sites look almost full.
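
The volume-control analogy can be checked with a simple shot-noise model: gain scales signal and noise alike, so the signal-to-noise ratio depends only on how many photons were collected. The sketch assumes photon noise only (no read noise), a linear sensor, and a hypothetical 40,000-photon reading at ISO 100.

```python
import math

# Minimal shot-noise sketch: ISO modelled as pure gain applied after capture.
# Assumptions: photon (shot) noise only, no read noise, a linear sensor, and a
# hypothetical 40,000-photon reading at ISO 100.

def output_snr(photons: float, gain: float) -> float:
    """Signal-to-noise ratio after amplification by `gain`."""
    signal = gain * photons
    noise = gain * math.sqrt(photons)  # Poisson noise: std = sqrt(mean)
    return signal / noise


if __name__ == "__main__":
    base_photons = 40_000  # hypothetical nearly-full pixel at ISO 100
    for iso in (100, 200, 400):
        gain = iso / 100
        photons = base_photons / gain  # exposure shortened to keep brightness
        print(f"ISO {iso}: output level {gain * photons:.0f}, "
              f"SNR {output_snr(photons, gain):.0f}")
```

Under these assumptions the output level stays at 40,000 at every ISO, while the SNR falls from 200 to about 141 and then 100, purely because each stop up halves the photons collected.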

I really should have had more coffee before reading this. I may have to try again.

Hi Alex, I'm a photographer and currently getting my MA in physics, and I see two potential problems with your article:

1. Shouldn't diffraction be related to the physical diameter of the lens opening? This is not the same as the f-number. For example, on a 600mm lens at f/4 light has to pass through an opening that's 15cm wide, whereas on a 20mm lens at the same aperture the "hole" is only 0.5cm wide. Therefore diffraction doesn't "happen" at a certain f-number. The diameter is what really matters, and that depends on both the f-number and the focal length of the lens.

2. A sensor with a smaller pixel pitch will not necessarily perform worse in low light; this is a common photography myth.

The reason is that at the pixel level the space between pixels is far from negligible. This allows two sensors of the same size with different resolutions to have roughly the same total pixel area. That means that during the same exposure the same amount of light falls onto the pixels, even though the pixel pitch is different. Downsampling both images to the same resolution will show that they have the same amount of noise.

Physical sensor size and the technology used are much more important. This is why my Canon 5D classic has as much noise as a D810 at ISO 3200, although the D810 has three times as many pixels, which means a much smaller pixel pitch. :)

Hi Marko,

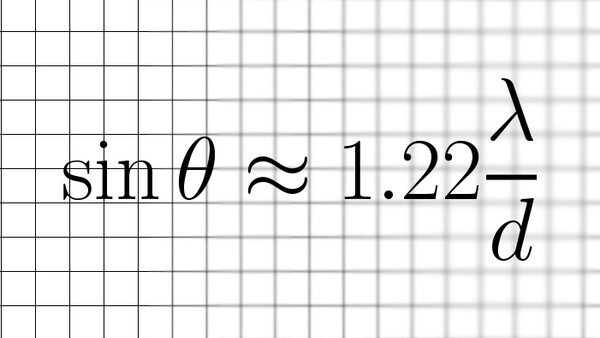

1. I see why you think that, but here's why it works out otherwise. Consider two objects close to each other that we're trying to resolve. We have sin(θ) = 1.22 λ/d, where d is the diameter of the lens opening. Because θ is small, we can use the small-angle approximation and write x/f = 1.22 λ/d, where x is the lateral separation of the two objects' images on the sensor and f is the distance from the lens to the sensor (essentially the focal length). Thus x = 1.22 λ (f/d), but f/d is just the f-number of the lens, so focal length and actual diameter drop out.
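
The argument can be checked numerically with the two lenses from the previous comment. The sketch assumes 550 nm light and computes the Rayleigh separation on the sensor, x = 1.22·λ·f/d, from each lens's focal length and physical aperture diameter.

```python
# Minimal sketch of the small-angle argument: the Rayleigh separation on the
# sensor, x = 1.22 * λ * f / d, depends only on f / d (the f-number).
# Assumptions: 550 nm light and the two lenses from the comment above.

def aperture_diameter_mm(focal_mm: float, f_number: float) -> float:
    """Physical diameter of the entrance opening: d = f / N."""
    return focal_mm / f_number


def rayleigh_separation_um(focal_mm: float, diameter_mm: float,
                           wavelength_um: float = 0.55) -> float:
    """x = 1.22 λ (f / d); f and d share units, so x has λ's units."""
    return 1.22 * wavelength_um * (focal_mm / diameter_mm)


if __name__ == "__main__":
    for focal in (600, 20):
        d = aperture_diameter_mm(focal, 4)
        print(f"{focal} mm at f/4: opening {d / 10:.1f} cm, "
              f"x = {rayleigh_separation_um(focal, d):.2f} µm")
```

Both lenses give the same x of roughly 2.7 µm even though their openings differ thirtyfold, because only the ratio f/d enters.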

2. Yes, I agree that at the pixel level the space between pixels is not negligible, which is why I used pixel pitch instead of pixel size. Physical sensor size and technology are absolutely important, but for the comparison in this article, the 5DIII and the 5DS have the same sensor size, and I qualified it by saying "advancements in camera technology aside." That being said, pixel pitch does influence noise performance, as the signal-to-noise ratio is proportional to the square root of the number of photons collected (which can be modeled by a Poisson distribution); thus, bigger pixels, better SNR (think of the A7S, which has a pixel pitch of 8.40 microns).
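
Both sides of this exchange fall out of the same Poisson model. The sketch below assumes shot noise only, photons per pixel proportional to pitch squared (equal fill factor and quantum efficiency), and a hypothetical light level; real sensors add read noise and other effects it ignores.

```python
import math

# Minimal shot-noise-only sketch. Assumptions: photons per pixel scale with
# pitch squared (equal fill factor and QE), no read noise, and a hypothetical
# photon density per square micron.

PHOTONS_PER_UM2 = 1000.0  # hypothetical exposure level


def photons_per_pixel(pitch_um: float) -> float:
    return PHOTONS_PER_UM2 * pitch_um ** 2


def per_pixel_snr(pitch_um: float) -> float:
    """Poisson statistics: SNR = N / sqrt(N) = sqrt(N)."""
    return math.sqrt(photons_per_pixel(pitch_um))


def snr_after_downsampling(pitch_um: float, output_pitch_um: float) -> float:
    """SNR after summing small pixels into one coarser output pixel."""
    pixels_per_output = (output_pitch_um / pitch_um) ** 2
    signal = pixels_per_output * photons_per_pixel(pitch_um)
    # Independent noise adds in quadrature across the summed pixels.
    noise = math.sqrt(pixels_per_output * photons_per_pixel(pitch_um))
    return signal / noise


if __name__ == "__main__":
    print(f"per-pixel SNR ratio: {per_pixel_snr(6.25) / per_pixel_snr(4.14):.2f}")
    print(f"5DS downsampled to 6.25 µm: {snr_after_downsampling(4.14, 6.25):.1f}, "
          f"5D Mark III native: {per_pixel_snr(6.25):.1f}")
```

Per pixel, the larger 6.25 µm pitch gives about 1.5 times the SNR (the ratio of the pitches), but once the 5DS image is downsampled to the same output pixel size, the two SNRs match under this idealized model.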

Thank you for the reply, I agree completely. :)